Simplify your streaming data flows with low-code Kafka streams

Moving from batch to Kafka stream processing involves a learning curve that can feel like running into a brick wall. Why not use skills you already have, such as SQL?

Kafka stream processing doesn't have to bite.

Are rekeying, exactly-once semantics and exception handling giving you a headache? Kafka streaming applications can be frustrating to build, and they are even harder to scale, troubleshoot and take to production.

Building & deploying Kafka stream processing applications doesn’t have to be so difficult.

And the less time you need to spend learning the fundamentals of Kafka, the more time you can spend solving your core business challenges.

What are low-code Kafka streams?

How do they help?

Lower skills required

Build & deploy streaming applications without the need to learn Kafka streams

Speed up app delivery

Self-service deployment framework for streaming apps on Kubernetes

Less tech debt

Applications defined and managed as configuration & deployed on your infrastructure

“Lenses not only helps developers to understand which data is there and how the data is represented, but provides a feedback mechanism on the schema itself. It’s a big part of our low-code app development process.”

Anders Eriksson, Data Engineer - Avanza

Can we develop on Kafka without understanding the nuances of stream processing?

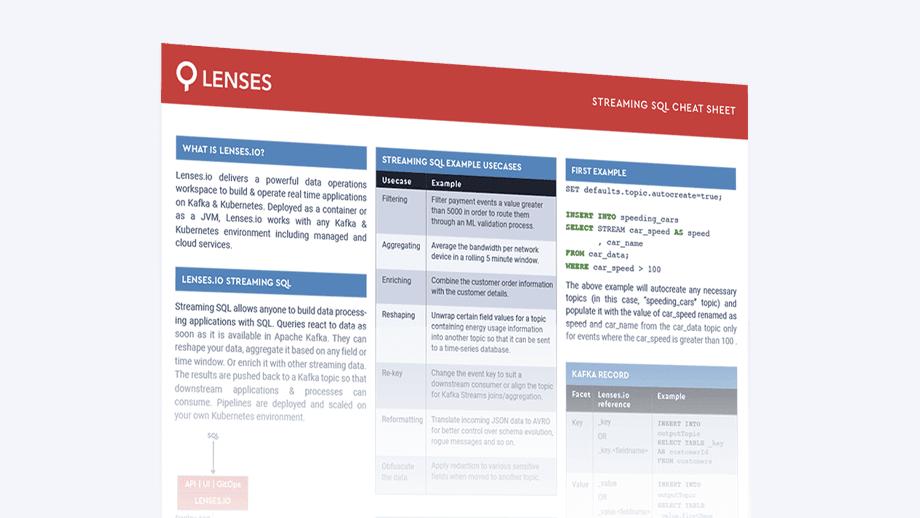

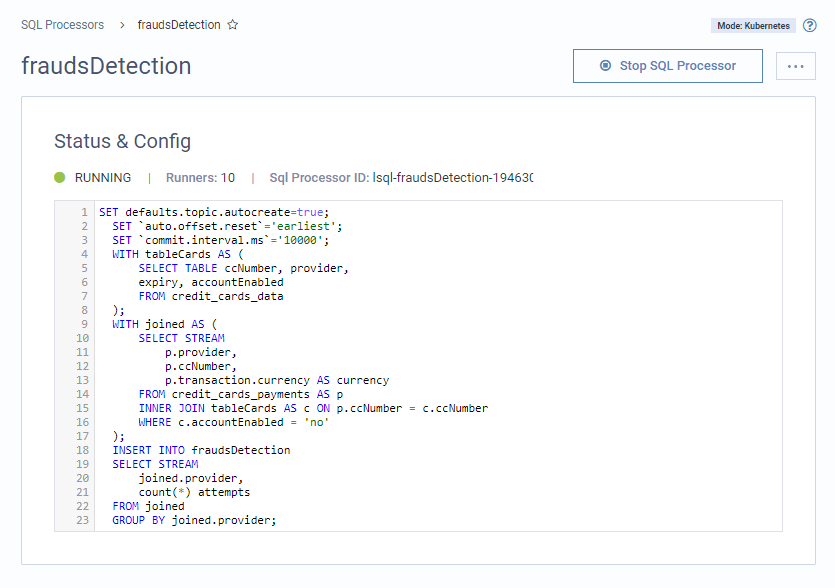

Filter, aggregate, transform and reshape data with streaming SQL, and deploy it on your existing Kubernetes or Kafka Connect infrastructure.
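To make "filter and aggregate" concrete, here is a minimal plain-Python sketch of what a continuous streaming query does conceptually. It is not Lenses SQL and not the Kafka Streams API; the event fields (`customer`, `amount`), the threshold and the function name `filter_and_count` are hypothetical. The key difference from batch is that every qualifying event emits an updated result for its key, instead of one final answer at the end.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple


def filter_and_count(events: Iterable[dict], min_amount: float) -> Iterator[Tuple[str, int]]:
    """Conceptual equivalent of a continuous query along the lines of
    SELECT customer, COUNT(*) FROM payments WHERE amount > min_amount GROUP BY customer
    (generic illustrative SQL, not any product's exact dialect).
    Emits the updated count for a key each time a qualifying event arrives."""
    counts: Dict[str, int] = defaultdict(int)  # per-key state kept between events
    for event in events:
        if event["amount"] <= min_amount:  # filter step: drop small payments
            continue
        counts[event["customer"]] += 1     # stateful aggregate keyed by customer
        yield event["customer"], counts[event["customer"]]


# Hypothetical events standing in for records read from a Kafka topic.
events = [
    {"customer": "alice", "amount": 250.0},
    {"customer": "bob", "amount": 40.0},
    {"customer": "alice", "amount": 120.0},
    {"customer": "bob", "amount": 300.0},
]

for key, count in filter_and_count(events, min_amount=100.0):
    print(key, count)
# alice 1
# alice 2
# bob 1
```

A real stream processor also has to persist that per-key state and recover it after failures, which is exactly the kind of detail a streaming SQL engine is meant to take off your hands.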

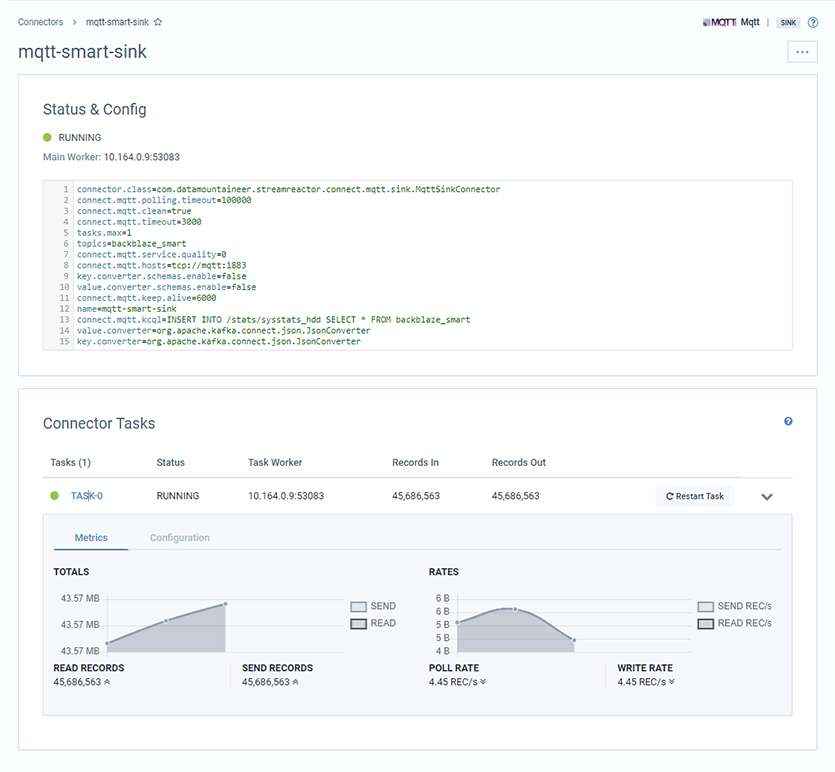

How can we be sure that our Kafka Connect connectors are running correctly?

Deploy, manage and monitor your Kafka Connect connectors from a single UI, with error handling and alerting.

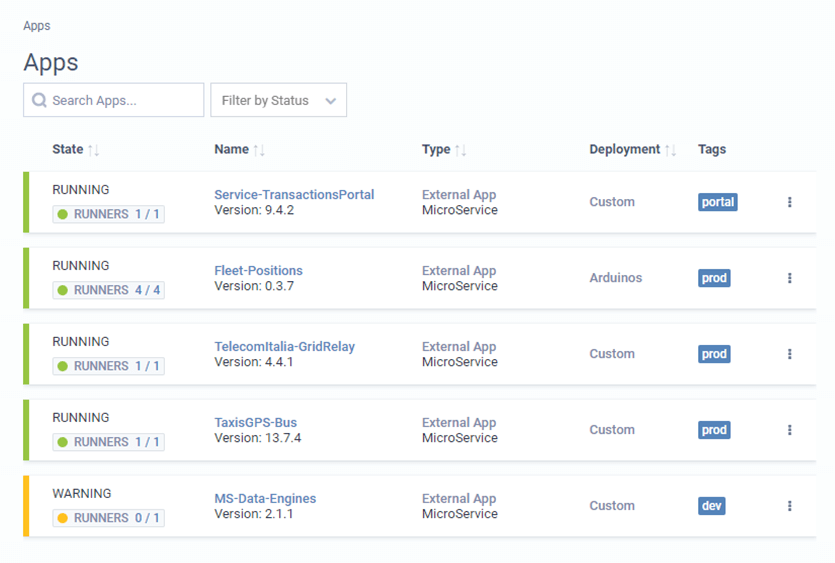

Our streaming application landscape is the Wild West. How can we know who has deployed what?

Document and tag applications across different product teams, frameworks and deployment pipelines.

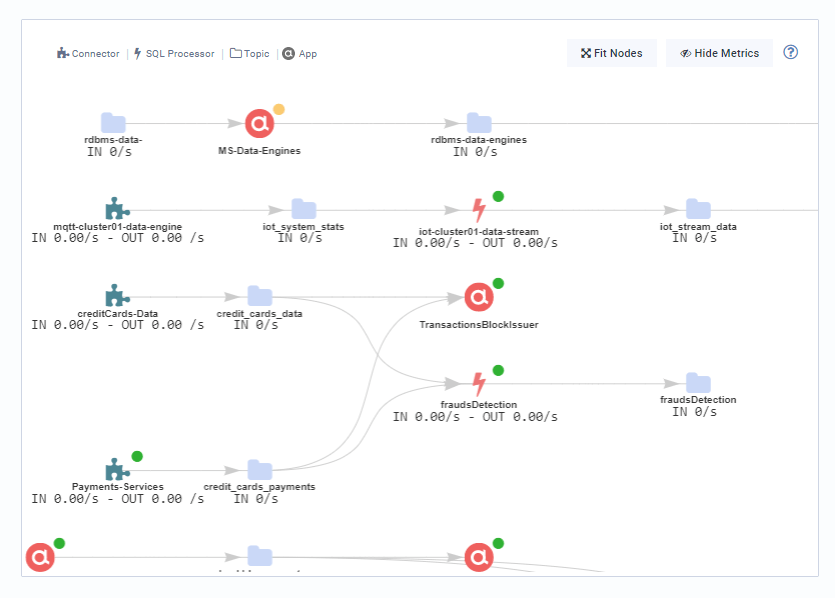

Can I see how my Kafka streaming applications are connected?

The real-time application topology provides a data-centric, Google Maps-style view of the dependencies between different apps and flows.

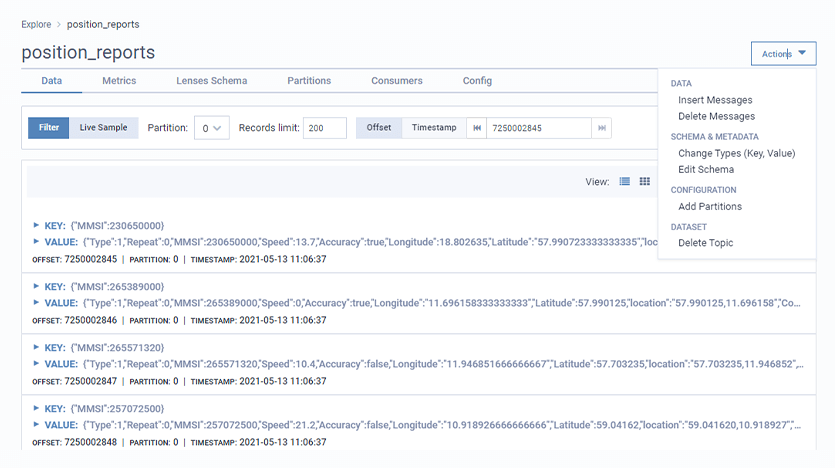

How can I explore or inject data in a Kafka topic to debug my application?

Use a secure UI or API to produce events into a Kafka topic to test your event-driven application. Works across all serialization formats.

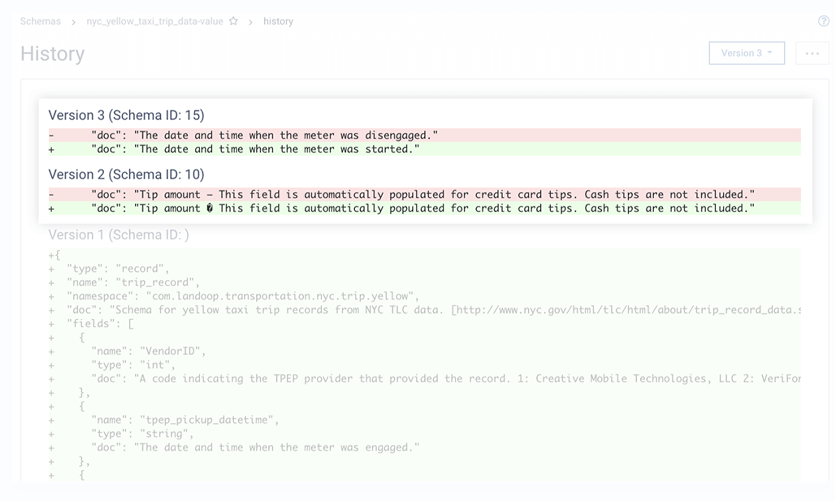

How can we create, evolve and verify schemas?

Manage and evolve schemas held in your third-party schema registry, such as Confluent or Cloudera, with access protected by RBAC.

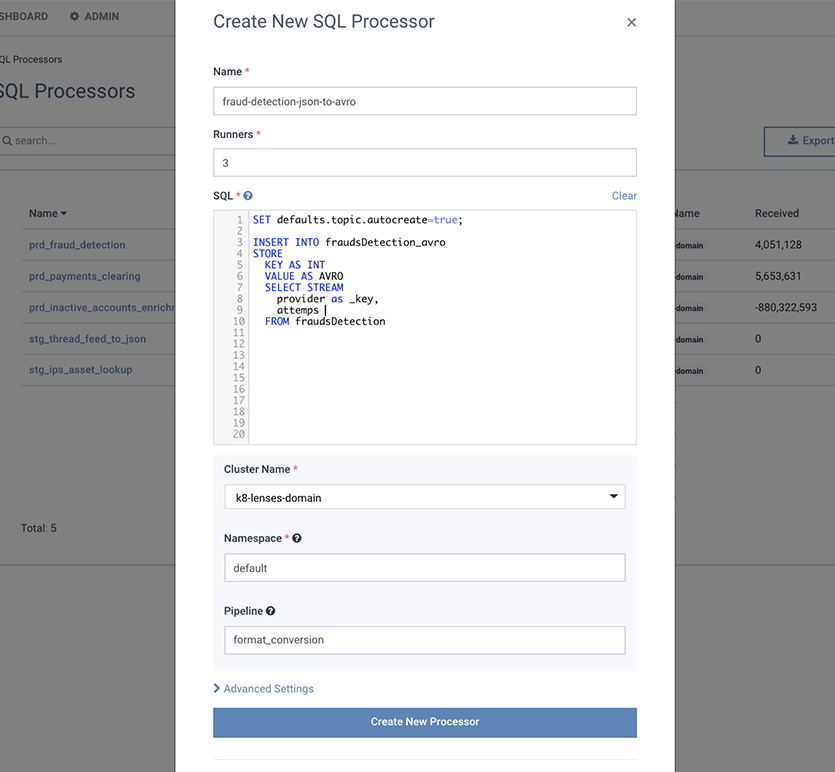

We need to integrate data from legacy sources and convert formats such as JSON to Avro.

Deploy data transformation workloads defined as SQL to convert data such as JSON, XML or CSV to Avro or other formats.
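Converting JSON to Avro requires an Avro schema for the target records. As a minimal, stdlib-only sketch, assuming flat JSON records whose values are primitives, the helper below derives an Avro record schema from one sample record. The function names and the record name `LegacyEvent` are hypothetical, nested objects and arrays are deliberately out of scope, and this does not show how Lenses performs the conversion.

```python
import json
from typing import Any, Dict, List, Union


def avro_type(value: Any) -> str:
    """Map a JSON-decoded Python value to an Avro primitive type name."""
    if value is None:
        return "null"
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    raise TypeError(f"unsupported value for this sketch: {value!r}")


def infer_avro_schema(json_text: str, name: str) -> Dict[str, Any]:
    """Build an Avro record schema from a single flat JSON sample record."""
    record = json.loads(json_text)
    fields: List[Dict[str, Union[str, List[str]]]] = []
    for key, value in record.items():
        t = avro_type(value)
        # A null sample reveals nothing about the real type, so this sketch
        # assumes an optional string (an Avro union of null and string).
        field_type: Union[str, List[str]] = ["null", "string"] if t == "null" else t
        fields.append({"name": key, "type": field_type})
    return {"type": "record", "name": name, "fields": fields}


sample = '{"id": 42, "email": "a@example.com", "active": true, "score": 9.5, "note": null}'
print(json.dumps(infer_avro_schema(sample, "LegacyEvent"), indent=2))
```

Inferring from a single sample is fragile; in practice you would validate the schema against many records (and check that JSON keys are valid Avro field names) before registering it in a schema registry.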

What are the components of low-code Kafka streams?

- Streaming SQL

- Snapshot SQL

- Application Deployment

- Application Monitoring

- Schema Management

- ACL Management

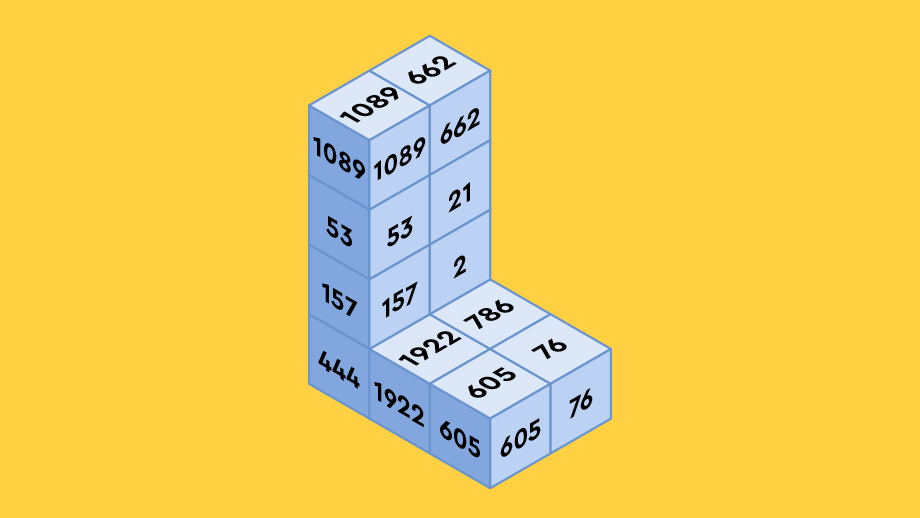

Case Study

This Swiss bank takes minutes to build and deploy Kafka stream processing applications

This highly regulated financial services provider used Lenses to help teams deploy on Kafka without constraints, speeding up the time-to-market of streaming apps by 10x and sending 600k targeted communications to end customers.

Video

Building a Cloud Data Platform 0 to 100 in Under 60 Minutes

- How to build a cloud data platform from scratch

- How to build and deploy a Kafka data stream

- How to add observability & data governance to the platform