Delivering customer apps 10x faster using Apache Kafka

How did a highly regulated Swiss payment provider move an event-driven marketing platform into production in under a year?

Viseca is one of the leading Swiss cashless payment providers, with over two million customers. But the race is on for them to find new revenue streams.

This is the story of how the Viseca team channelled the power of Apache Kafka across customer initiatives in just a year, using Lenses.io to deliver distributed data and streaming applications 10x faster.

Financial institutions such as Viseca are built on foundations of trust. But with the arrival of new fintech challengers, there’s a need for traditional service providers to take that long-standing trust and turn it into instant, personalized customer service.

Enter their greatest asset: data. When paired with the right technology, Viseca has the chance to go beyond what their customers expect by turning analytics insights into well-loved, well-timed experiences.

From delivering mail voucher rewards on a customer’s birthday to instantly addressing service enquiries via mobile app, rewriting Viseca’s value in a real-time world requires a vision, infrastructure and delivery model for real-time data.

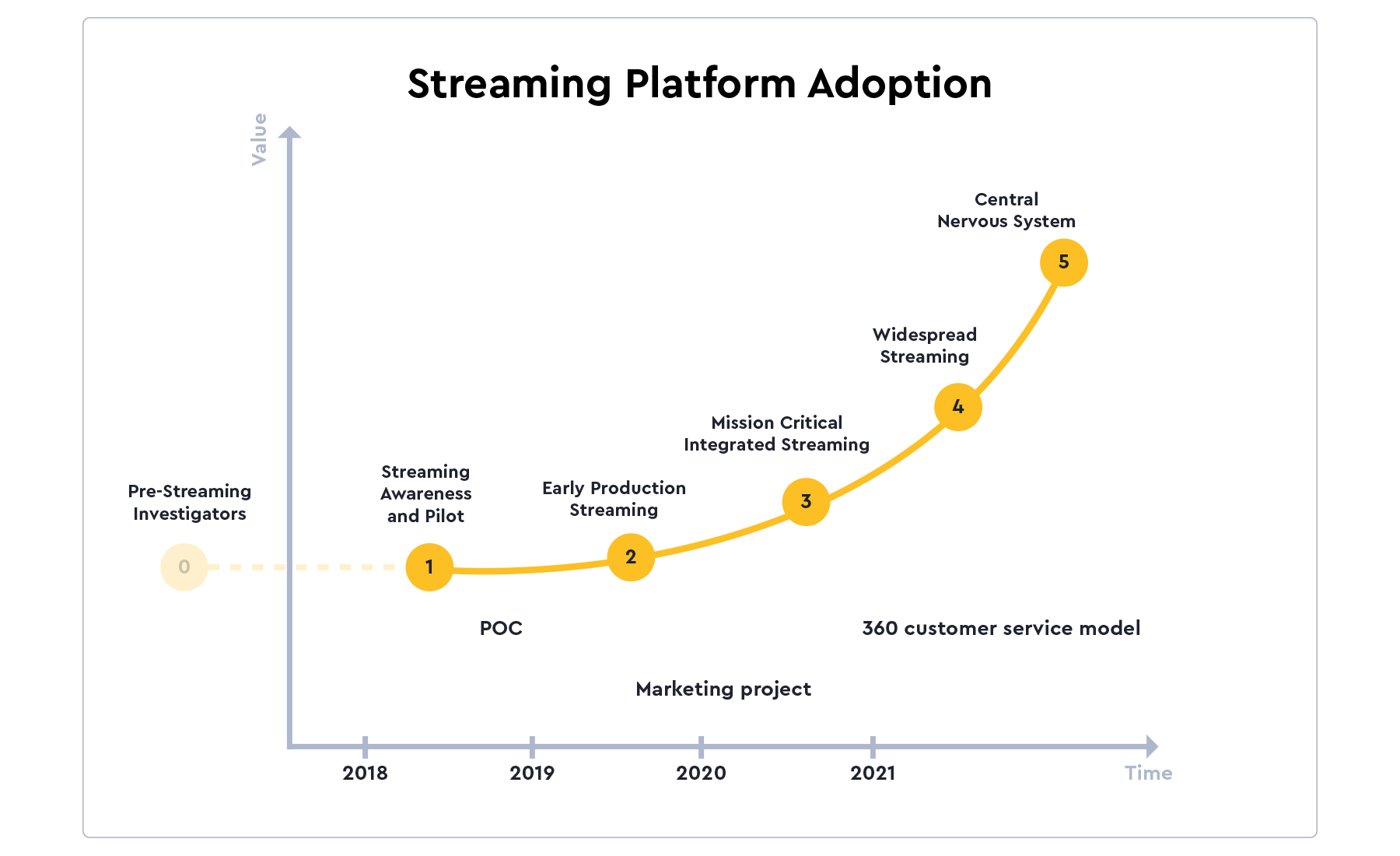

This was why Vladimiro Borsi (Vladi to his colleagues), Enterprise IT Architect at Viseca, began scoping a new Streaming Data Platform.

Although this platform would be designed to support their marketing initiatives, it would also be the first step towards Viseca running their entire business in real-time.

It was clear to Vladi that the solution design would need to go beyond integrating the 15+ data systems that form part of their marketing applications, including CRM, direct mail, mobile app, email, website and others.

It needed to support a continuous flow of events generated by applications across the entire business and meet Viseca’s strict corporate governance requirements.

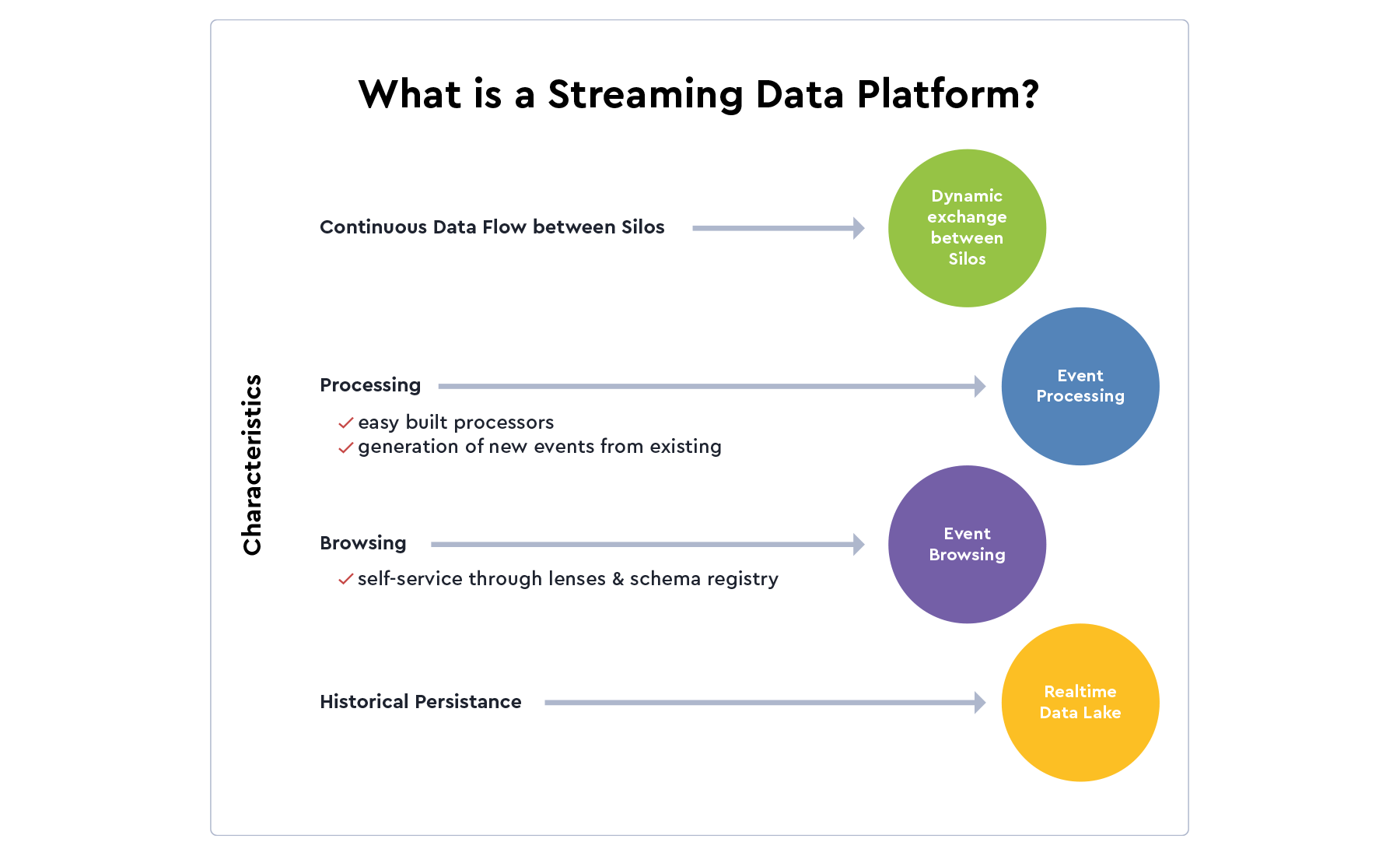

Vladi and his team mapped out a scalable event-based architecture to support and sculpt a Streaming Data Platform based on the following principles:

1. Design data experiences for all

Supported by a team of governance specialists and technologists, Vladi worked with Lenses.io to provide monitoring, exploration and governance of real-time data. This enabled Viseca to simplify the use of open-source components such as Apache Kafka while still designing a platform that business users could work with.

“The Lenses.io team is experienced in supporting enterprise environments for financial institutions. Lenses is agnostic towards key Open-Source technologies, so we were confident the software would govern our new event data platform - which was confirmed with great results.”

Vladimiro Borsi, Enterprise IT Architect

2. Meet data regulations

Fulfilling strict governance and compliance requirements is a baseline for this Swiss financial institution.

“I believe the biggest value of Lenses.io is in governance support, at operational process and information security level, which makes it an ideal solution for enterprises for whom scale is a matter of serious attention.”

Dario Carnelli, Governance Expert (ISACA Certified)

3. Move with customers

Keeping up with the real-time requirements and movements of 2+ million customers is a strategic imperative.

“Lenses.io is a great product that helps companies boost natural Kafka-based streaming capabilities with DataOps best practices.”

Boris Lentini, Account Site & Delivery Director - Grid Dynamics

Goals

To apply these principles, Vladi and his team considered the following architectural drivers.

Their technologies of choice, Apache Kafka, Hadoop and Flink, offered the flexibility and reliability to harness their multitude of data. This architecture evolved into Viseca’s Streaming Data Platform (SDP).

The team also needed visibility into what was happening in their SDP to fulfil their governance goals, giving both technical and non-technical users a way to operate the data under authentication and authorization control.

Lenses.io supports Viseca’s SDP with the following features, allowing teams to build and operate real-time data flows without the need to learn each individual technology in detail.

RESULTS & LESSONS LEARNED

The secret to success for Viseca’s Streaming Data Platform wasn’t only in identifying the right technologies. They achieved both short-term business results and paved the way for longer-term customer-centric innovation through creating shared data experiences across the organization.

Vladi and his team have made great progress in turning a complex siloed project into an enterprise-wide Streaming Data Platform that delivers measurable business results.

Integrating with data on demand no longer takes weeks; it takes fewer than 10 minutes, with <1 second latency.

The work continues to turn the Streaming Data Platform from a complex siloed project into an enterprise-wide imperative.

For Marketing

The introduction of real-time streaming services meant that teams could innovate without constraints, speeding up time-to-market of streaming apps by 10x and sending 600k targeted communications to customers.

For IT

Fast, easy development of data pipelines through the Lenses.io toolset meant high adoption rates without the need to build deep internal expertise in every open-source technology, such as Apache Kafka.

Security & governance

Viseca adheres to strict data governance and compliance standards, through the following Lenses.io features:

Development

Not only could developers build and deploy simple apps in minutes, but Lenses.io also gave them strong support in improving quality and reducing the time needed for bug fixes (data inspection).

“If our engineers had to log into each server to troubleshoot or run instructions from the command line to inspect data, they would have gone crazy. Lenses.io immediately helped by providing a central solution with an intuitive UI for visibility into the whole data environment.”

Vladimiro Borsi, Enterprise IT Architect

Operations

Operations benefited from a flow of dynamic key performance indicators, integrated alerting for service operators and strong support for incident troubleshooting (data search).