Andrew Stevenson

The best of Kafka Summit 2020

From Kafka COVID cures to shifting gears with your GitOps.

Andrew Stevenson

This year’s Kafka Summit was a first for many, including conference veterans and Confluent themselves. We fumbled around in this virtual world bookmarking and watching the most sought after stories and proven use cases in streaming technology - told by the bright people that build it.

What we found, overwhelmingly, were stories about scaling Kafka in times of unpredictability.

As Jay Kreps mentioned in his keynote, "the easy part is running one application on Kafka. The hardest part is having the whole organization on it." Even then, what about the rest of your data landscape?

Here are some of the top talks that stuck out for us in a sea of useful diagrams, demos and Q&As.

Reducing Total Cost of Ownership, in particular storage costs, has been important for many organizations that want to deliver an economically scalable data platform.

Data warehousing and data stores have been working on this for a long time, with S3 from AWS in particular leading the way in continuously driving down costs. It was only a matter of time before Kafka, increasingly promoting itself as a next-gen database, would have to prioritize reducing storage costs too.

This is a hot topic amongst our customers and was also talked about during Kafka Summit, being called out by Jay in his keynote (check out his talk).

Organizations like Nuuly, for example, "want to focus on building core business capabilities and not spend time or mindshare on Kafka infrastructure.”

Pinterest also briefly talked about their initiatives to reduce the cost of Kafka storage in their session.

Whilst we wait for improvements to Kafka, check out our new S3 connector which allows you to sync data from Kafka to S3 and reduce your long-term storage costs:

https://dzone.com/articles/why-you-should-and-how-to-archive-your-kafka-data

The financial services sector has been one of the fastest to adopt Kafka, with digital natives like Avanza and Riskfuel leading the roost. They’ve gone far beyond initial basement project success with streaming data, saving many hours by making their Kafka data platform self-service and extending adoption across hundreds of technical and business users.

We were interested to see how ING bank did it.

They’ve been in production for +6 years and have grown from 20k to 300k messages per second across 1800 topics.

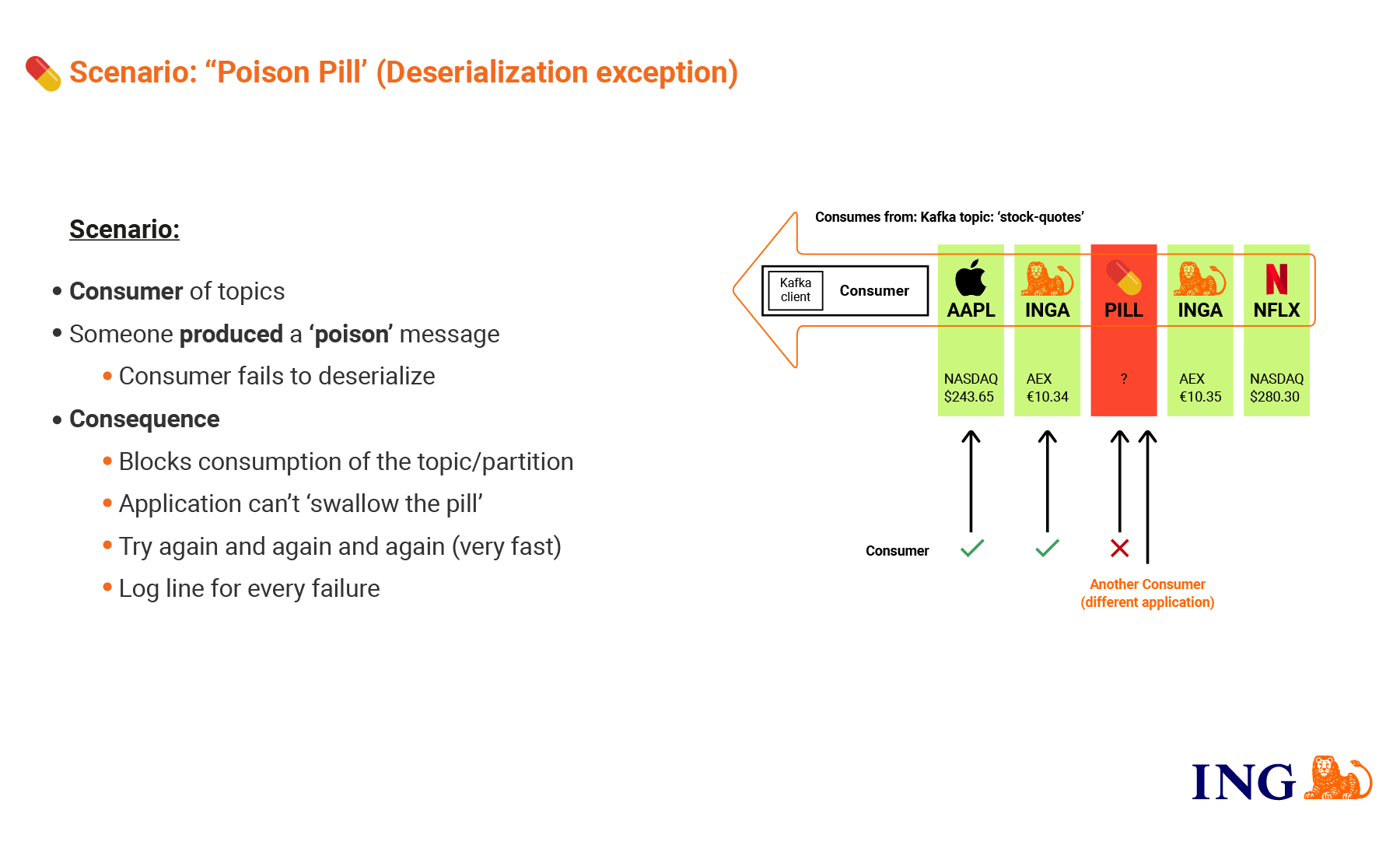

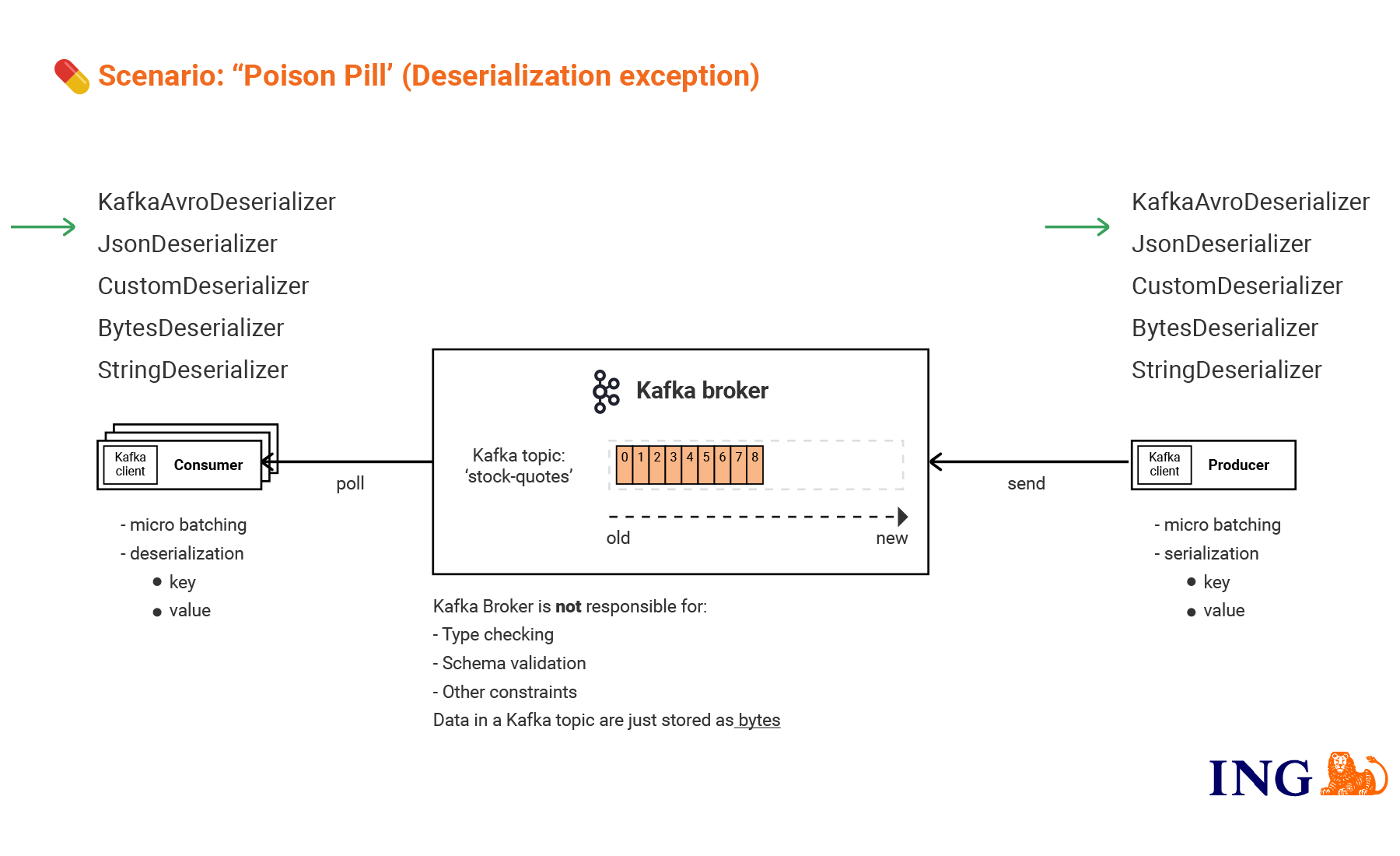

Tim van Baarsen, Software Engineer at ING shares some post-honeymoon learnings from their journey in scaling Kafka, including a ‘poison pill’ scenario to demonstrate how teams can plan for the unexpected on a micro level when it comes to producing and consuming Kafka topics - and avoid catastrophic scenarios down the road.

Their dos & don’ts?

Consuming: Always plan for de-serialization exceptions. Don’t wait until the topic retention period has passed, change the consumer group or programmatically update the offset - instead configure your error-handling and alerting accordingly.

Producing: Do share your secrets securely, but don’t share your serializers

Securing: Ask yourself who can produce data to your topics? Who can consume? How can you keep a record of this which is accessible to the right users and implement data policies to govern data and uphold a central source of truth?

One security incident can bring an entire enterprise to its knees.

Many of us at Lenses have worked in enterprise security and helped to build security data platforms. Security teams tend to work in silos relative to the rest of the organization and have a unique set of requirements when it comes to data analytics. From our experience, they are by far the largest consumer of data in any business: when you never know where a security breach is coming from, you can never have too much data at your disposal to investigate.

Many security vendors provide an end-to-end data collection, storage and analytics technology; Splunk being the leader in the field. But increasingly, organizations need to decouple their data collection from their storage tiers. Reasons include avoiding vendor lock-in, cost savings, and adding the ability to develop data mesh architectures where different best-of-breed solutions can consume from the same data source.

So security teams have been turning to Kafka for the last few years. They find it a great way to consume data from other teams across a business - a means that is often crucial to responding to an incident and monitoring in real-time (see how you can set-up Splunk to receive audits.)

McKesson's Kafka Summit session was a great explanation of how they decoupled their SIEM platform from the data collection with Kafka Connect.

Take a look at this video explaining how you can use Kafka Connect to send data to Splunk: https://www.youtube.com/watch?v=cnKHhE8ApPA.

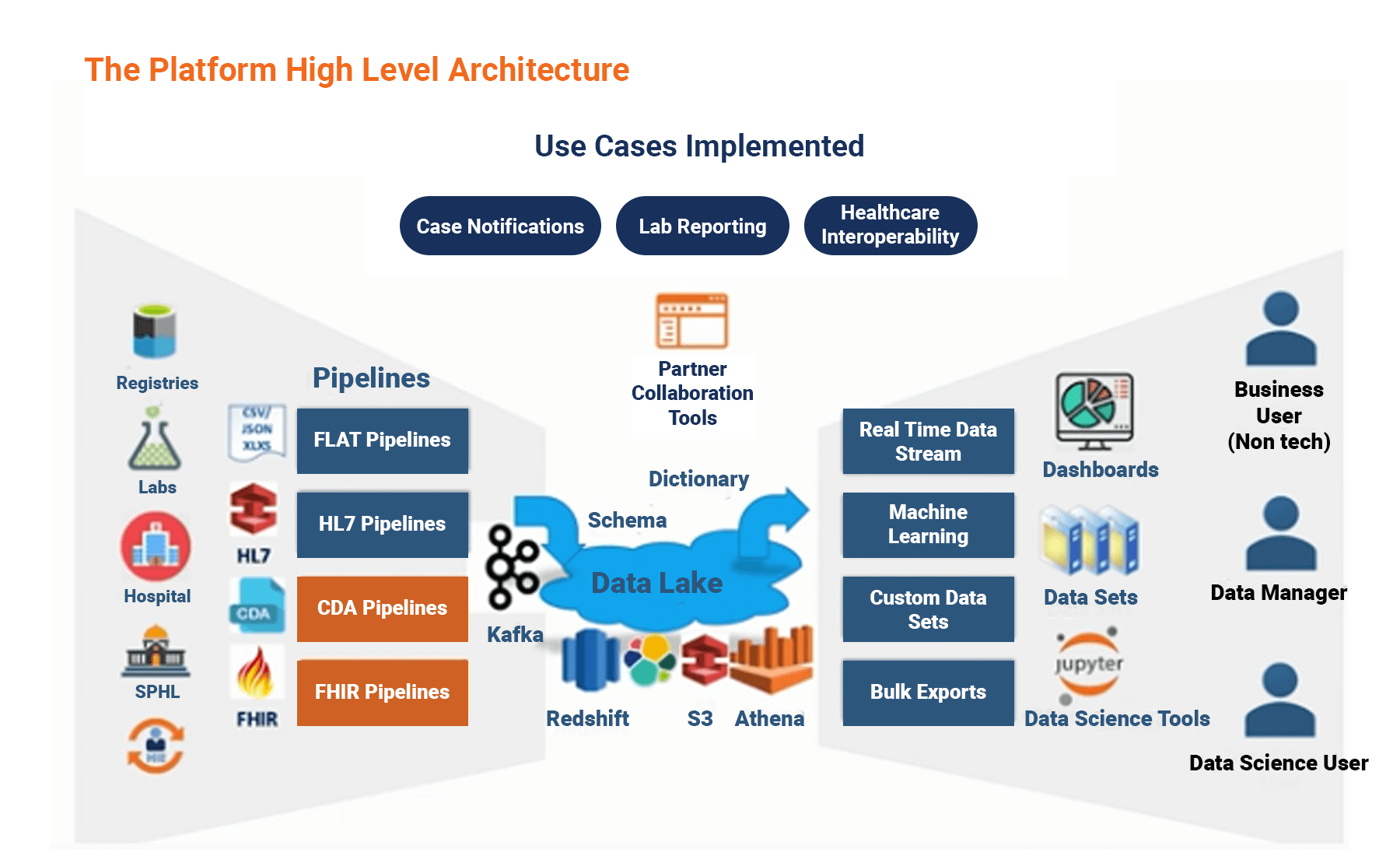

Rishi Tarar, Enterprise Architect explains how the Centers for Disease Control and Prevention CELR (Covid Electronic Lab Reporting) program is using real-time data, Kafka and KStreams to solve the Coronavirus pandemic - the biggest real-world problem of our lifetime.

Collecting all COVID-19 lab data for the whole of the USA isn’t your average business event streaming challenge. This data has to be delivered at the same time, every day, from every state since the beginning of time - noisy data sent through different feeds, that can’t easily be worked into a merge view. And then it needs to reported to the White House! They also had to build this architecture as quickly as possible to record and report testing data back to federal agencies.

What can we learn from the CELR in terms of structuring Apache Kafka for rapid response, reliability and predictability?

Architect for transition: That future state - when you’ll need a transition plan in place for those new streams - doesn’t exist. Transition is the state. This makes an event-driven architecture fundamental for solving real-world challenges.

Frame events in their respective contexts: The CELR organizes their pipelines by program and pathogen. But these events require a richer context to disseminate value - not just describing the events themselves, but the events that led to them.

Here metadata, raw events and the ability to catalog data to quickly track provenance become essential in understanding how those events unfold.

Make infrastructure and events visible: You can’t solve what you can’t see. Rishi places importance on observable events as data products so that streaming platform users and stakeholders can react to what’s happening in front of them, from software engineers to the White House task force.

Deploying Kafka is a well-trodden path. But you are burning dollars until you put your application landscape on top.

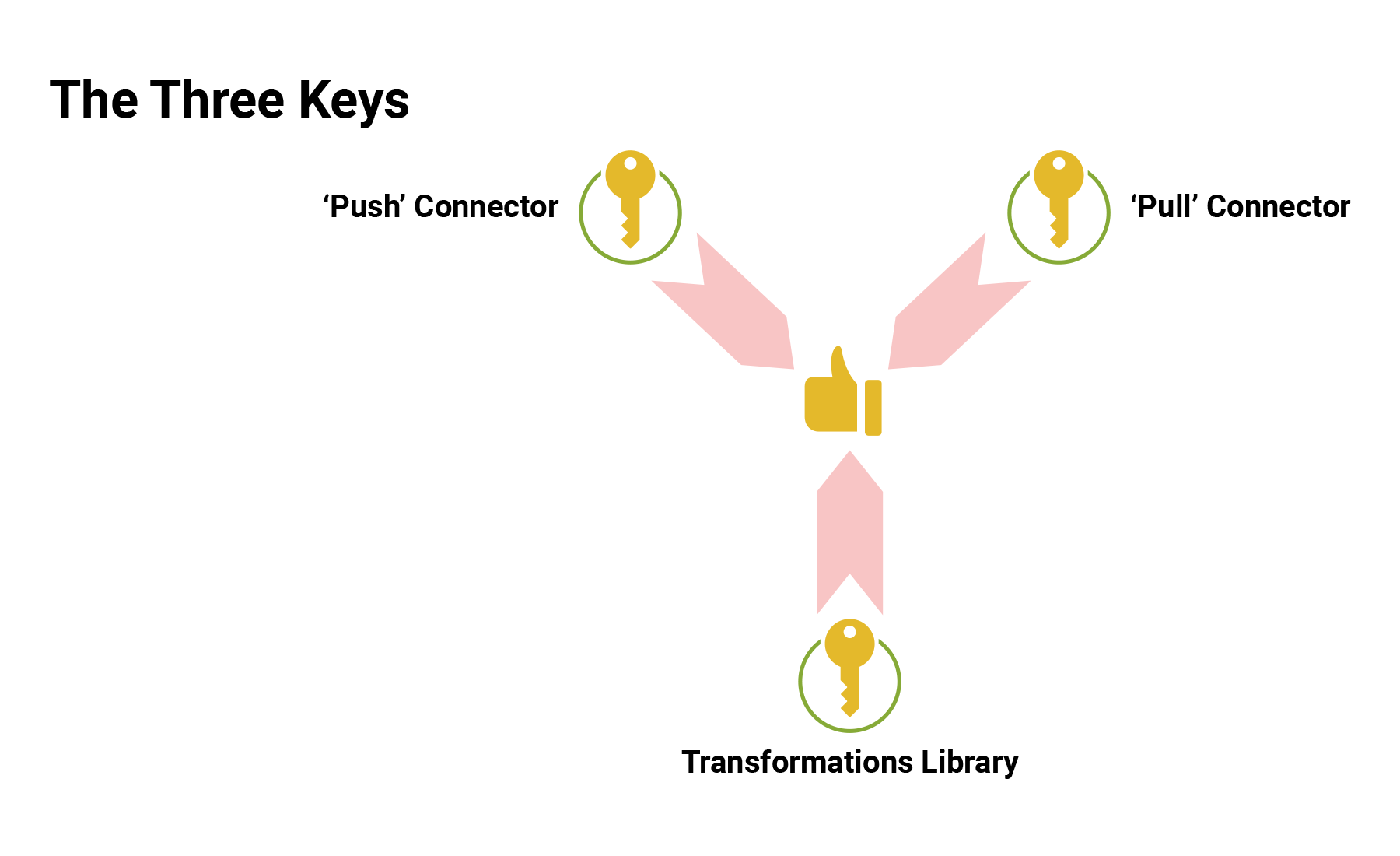

To run a successful data platform, you need two key ingredients - automation and governance. GitOps delivers both by allowing you to define your entire Kafka environment as configuration and govern it with Git. But there are different ways of automating deployment across Kafka and Kubernetes: push and pull. Spiros, our Head of Cloud and I walk you through the benefits of different approaches, sharing learnings from our time spent working with a spectrum of young tech unicorns and mature global enterprises.

We do a live demonstration of a new Lenses Operator for Git that continuously monitors a git branch and deploys changes over your Apache Kafka and Kubernetes environments.

Watch our full Kafka Summit talk and demo here (we walk through the same topic with DevOps.com). If you'd like to try out Git and DataOps on your own Kafka cluster, you can spin up a Lenses workspace in the cloud, on-prem or as an all-in-one instance of Kafka.