Andrew Stevenson

Don’t trust Kafka Connect with your secrets

Why and how you should be securing your Kafka Connect connector passwords with Azure KeyVault

Open source is great, but sometimes it misses the mark for security at enterprise level. Take Kafka Connect. I’ve built a few connectors in my time, and before Connect was introduced to Apache Kafka back in 2015 I used other hand-cranked pieces of software; security was always a primary concern.

Nothing blocks a project's success faster than failing to reach production. There are a number of reasons projects stall, but high on the list, usually at the top, is security.

I’ve spoken with a number of our clients who say that unless it passes the security review you are going nowhere, regardless of how cool your technology is. I experienced this at tier 1 investment banks: passwords in JDBC connections do not fly!

Connect can help here, provided connectors define sensible configurations and declare sensitive settings with the PASSWORD type from Kafka's ConfigDef class. This masks the values so they are not displayed in logs or when a configuration is printed, which helps reduce the risk of a leak.
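
To make the masking idea concrete, here is a minimal stdlib-only sketch. Kafka's real type is `org.apache.kafka.common.config.types.Password`; the `MaskedPassword` class below is a hypothetical stand-in that shows the principle: the raw value is only reachable through an explicit accessor, while `toString()`, which loggers and string concatenation call, returns a fixed mask.

```java
import java.util.Objects;

/**
 * Hypothetical stand-in (not a Kafka class) for the idea behind a
 * password-typed config value: printing it never shows the secret.
 */
final class MaskedPassword {
    // Mask string chosen for illustration.
    static final String HIDDEN = "[hidden]";

    private final String value;

    MaskedPassword(String value) {
        this.value = Objects.requireNonNull(value, "value");
    }

    /** Explicit access for code that genuinely needs the secret, e.g. a database driver. */
    String value() {
        return value;
    }

    /** Anything that prints this object, such as a log statement, sees only the mask. */
    @Override
    public String toString() {
        return HIDDEN;
    }
}
```

For example, `"Connecting with password " + new MaskedPassword("s3cret")` renders as `Connecting with password [hidden]`, while `value()` still returns `s3cret` for the code that needs it.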

All great, but Connect comes with a set of REST APIs that will still happily return the plaintext sensitive data when you request a connector's configuration. Additionally, if you are practising GitOps (and you should be), you can leak sensitive data through the application configuration you keep in source control.
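
One way to catch the GitOps leak before it happens is a pre-commit style check over the connector configurations you keep in Git. The sketch below is a hypothetical helper, not part of Kafka Connect: it treats any key whose name contains "password", "secret" or "token" as sensitive (an assumed heuristic) and flags it unless its value is an indirect `${...}` reference.

```java
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

final class PlaintextSecretCheck {
    // Hypothetical heuristic: key names containing any of these hints are treated as sensitive.
    private static final List<String> SENSITIVE_HINTS = List.of("password", "secret", "token");

    // A value counts as safe only if it is entirely one ${provider:...} reference.
    private static final Pattern REFERENCE = Pattern.compile("\\$\\{[^}]*:[^}]*\\}");

    /** Returns, sorted, the keys that look sensitive but hold a literal value. */
    static List<String> findPlaintextSecrets(Map<String, String> config) {
        return config.entrySet().stream()
                .filter(e -> looksSensitive(e.getKey()))
                .filter(e -> !REFERENCE.matcher(e.getValue()).matches())
                .map(Map.Entry::getKey)
                .sorted()
                .collect(Collectors.toList());
    }

    private static boolean looksSensitive(String key) {
        String lower = key.toLowerCase(Locale.ROOT);
        return SENSITIVE_HINTS.stream().anyMatch(lower::contains);
    }
}
```

A check like this can fail a build whenever the returned list is non-empty, so plaintext passwords never reach the repository in the first place.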

Back in August 2018, Kafka introduced external secret providers (config providers). It’s a great feature: it lets you put indirect references in a connector's configuration that are resolved at runtime. Apache Kafka ships a File Config Provider, which lets you store secrets in a separate file; connector configurations then reference this file to resolve the secrets.
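
Here is a stdlib-only sketch of what a file-based provider does with a reference such as `${file:/opt/connect/secrets.properties:db.password}`: the middle part names a properties file and the last part names a key in it. `FileSecretLookup`, the file path and the key are hypothetical; Kafka's real implementation is `org.apache.kafka.common.config.provider.FileConfigProvider`.

```java
import java.io.IOException;
import java.io.Reader;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Properties;

/** Hypothetical illustration of resolving one key from a secrets properties file. */
final class FileSecretLookup {
    static String lookup(Path secretsFile, String key) throws IOException {
        Properties props = new Properties();
        // The secrets live in plaintext in this file; the provider only moves them
        // out of the connector configuration.
        try (Reader reader = Files.newBufferedReader(secretsFile, StandardCharsets.UTF_8)) {
            props.load(reader);
        }
        String value = props.getProperty(key);
        if (value == null) {
            throw new IllegalArgumentException("No key '" + key + "' in " + secretsFile);
        }
        return value;
    }
}
```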

The File Config Provider is great. It works. But you still need to somehow write and manage these secret files and they are still sitting there on disk.

At Lenses.io, we thought we could do better. I have often said there’s more to your data platform than just Kafka. You also need your data sources and sinks, a place to deploy your application landscape (the important bit) and somewhere to secure your secrets. This is why Azure is a great choice: it provides all of these services, plus a managed Apache Kafka service to boot.

We can leverage the cloud to build data-intensive applications from commoditized technology.

To deepen Lenses.io's cloud integrations further, we built external secret providers for AWS Secrets Manager, Azure KeyVault and HashiCorp Vault. The project is open source, Apache 2.0 licensed and available on GitHub.

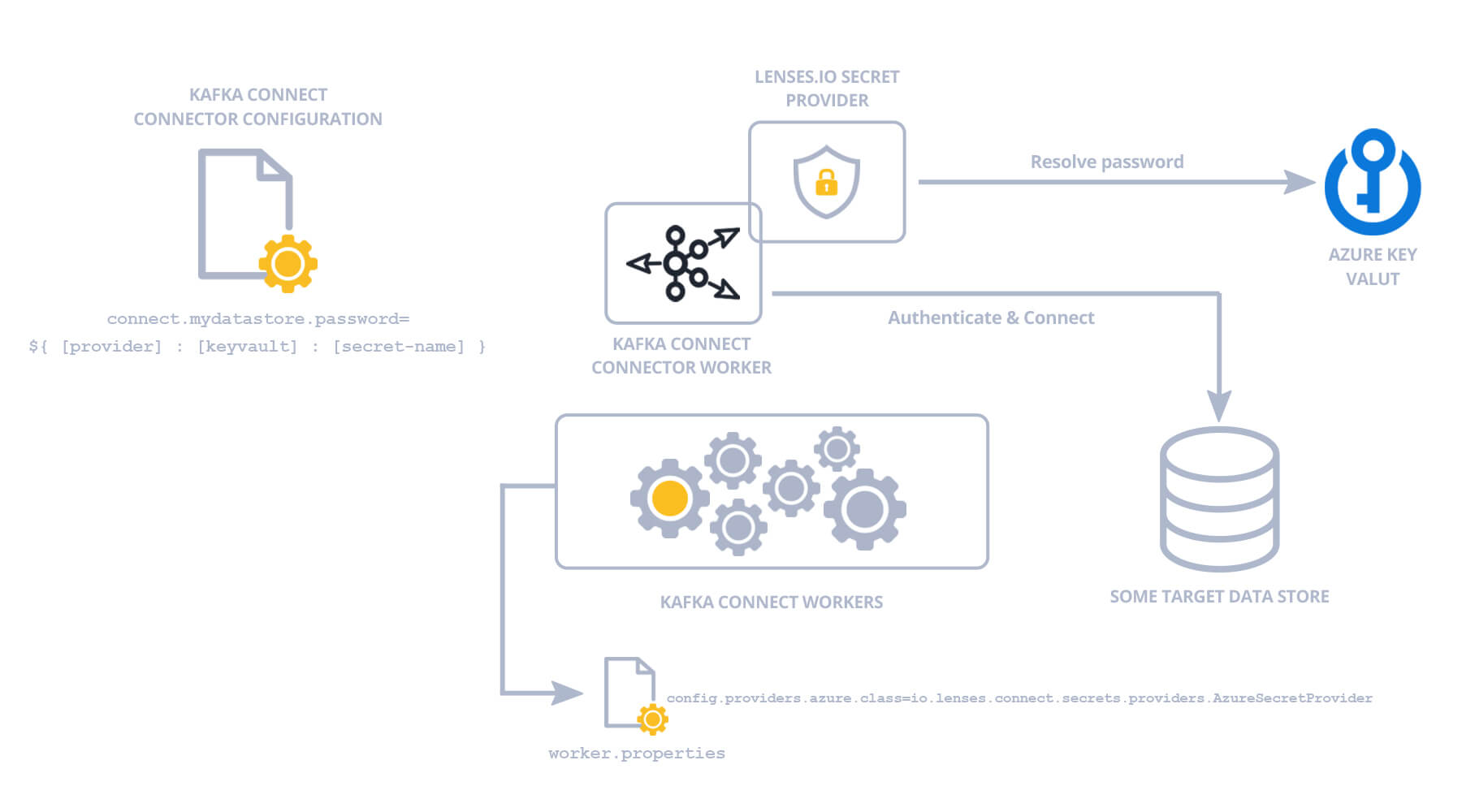

Let’s have a look at how it works with Azure KeyVault and HDInsight, but the principle is the same regardless of the secret provider.

For the secret provider plugins to work, they need to be available to the Connect workers in the cluster, and they need to be configured. The recommended way to add plugins to Connect is to use the “plugin.path” worker configuration and place the jars in a separate folder under this path, which gives each plugin classloader isolation. For Azure, however, we need to add the jar to the worker classpath instead: the Azure SDK uses a service loader with the default system classloader, so from an isolated plugin folder it won’t find the HttpClient implementation it needs.

In the worker properties file, add the provider configuration, substituting the credentials of an Azure service principal that has access to the KeyVault holding your secrets.

This tells Connect that we want to use a configuration provider, registers an Azure Secret Provider under the name azure, and sets the class to instantiate for that provider along with its properties. The Azure Secret Provider can authenticate to a KeyVault either with Azure Managed Service Identity or with the credentials of an Azure Service Principal.
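
The shape of that worker configuration follows Kafka's config-provider naming convention: `config.providers` lists the provider names, `config.providers.<name>.class` gives the class to instantiate, and `config.providers.<name>.param.<key>` passes properties to it. The helper below is a hypothetical sketch that only applies those prefixes. The Azure provider's actual class name and authentication parameters should be taken from the secret-provider project's documentation, so the usage example uses a placeholder class name and only the `file.dir` parameter this post describes.

```java
import java.util.Map;
import java.util.Properties;

/**
 * Hypothetical helper that lays out config-provider settings for a worker:
 *   config.providers=<name>
 *   config.providers.<name>.class=<provider class>
 *   config.providers.<name>.param.<key>=<value>
 */
final class ProviderWorkerConfig {
    static Properties forProvider(String name, String providerClass, Map<String, String> params) {
        Properties props = new Properties();
        // Register the provider under the name used in ${name:...} references.
        props.setProperty("config.providers", name);
        String prefix = "config.providers." + name;
        props.setProperty(prefix + ".class", providerClass);
        // Every provider-specific setting is passed through the .param. prefix.
        params.forEach((key, value) -> props.setProperty(prefix + ".param." + key, value));
        return props;
    }
}
```

For example, `ProviderWorkerConfig.forProvider("azure", "com.example.PlaceholderProvider", Map.of("file.dir", "/tmp/connect-secrets"))` yields `config.providers=azure`, `config.providers.azure.class=com.example.PlaceholderProvider` and `config.providers.azure.param.file.dir=/tmp/connect-secrets`, which `Properties.store` can write into the worker properties file.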

Now we can start our worker as usual. If you inspect the logs, you will see the plugin initialise, with output something like this:

INFO AzureProviderConfig values:

Great: the worker is up with the plugin installed, and we can submit a connector that references a secret in a KeyVault.

If I want to create an instance of the Stream Reactor Cassandra Connector without the plugin I need to set a configuration like this:

Notice the password is in plaintext and exposed. Not good. With the plugin installed, we can provide an indirect reference for the Connect worker to resolve at runtime.

For Azure, this takes the form of:

${[provider]:[keyvault]:[secret-name]}

Where [provider] is the name we set for the Azure Secret Provider in the worker properties (azure).

The [keyvault] part is the URL of the KeyVault in Azure without the “https://” scheme, because Connect uses “:” as a separator.

The [secret-name] is the name of the secret that holds the value we want in the keyvault.

If I have a keyvault called “lenses” in Azure and a secret called cassandra-password stored in this Keyvault, the value I would set when submitting the Connector would be:

connect.cassandra.password=${azure:lenses.vault.azure.net:cassandra-password}
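
The reference syntax can be pulled apart with a regular expression modeled on the one Kafka's `ConfigTransformer` uses for `${provider:[path:]key}` variables. This sketch (class and method names are hypothetical) parses a reference and also builds one from a full vault URL, stripping the `https://` scheme so that no extra `:` separator remains.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical parser/builder for ${provider:vault:secret} references. */
final class SecretReference {
    // ${provider:[path:]key}, with ':' as the only separator.
    private static final Pattern REF =
            Pattern.compile("\\$\\{([^}]*?):(([^}]*?):)?([^}]*?)\\}");

    final String provider; // e.g. "azure", the name set in the worker properties
    final String vault;    // KeyVault host, without the https:// scheme
    final String secret;   // name of the secret inside the vault

    private SecretReference(String provider, String vault, String secret) {
        this.provider = provider;
        this.vault = vault;
        this.secret = secret;
    }

    static SecretReference parse(String text) {
        Matcher m = REF.matcher(text);
        if (!m.matches()) {
            throw new IllegalArgumentException("Not a ${provider:path:key} reference: " + text);
        }
        return new SecretReference(m.group(1), m.group(3), m.group(4));
    }

    /** Builds a reference from a vault URL, dropping the scheme and any trailing slash. */
    static String fromVaultUrl(String provider, String vaultUrl, String secret) {
        String host = vaultUrl.replaceFirst("^https://", "").replaceAll("/+$", "");
        return "${" + provider + ":" + host + ":" + secret + "}";
    }
}
```

With this pattern, leaving the scheme in, as in `${azure:https://lenses.vault.azure.net:cassandra-password}`, makes the first `:` after `https` end the path: the vault parses as `https` and the secret name comes out as `//lenses.vault.azure.net:cassandra-password`.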

Once the connector is submitted, the worker resolves the value of the cassandra-password secret stored in the KeyVault and makes it available to the tasks so they can connect securely.
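
A rough sketch of that resolution step, assuming an in-memory map in place of a real KeyVault client: every `${azure:vault:secret}` reference in the connector configuration is replaced with the looked-up value, while plain values and references to other providers pass through unchanged. `ReferenceResolver` and the lookup function are hypothetical; in Connect, the worker performs this step through the configured provider.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.BiFunction;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical resolver: replaces ${provider:vault:secret} references in config values. */
final class ReferenceResolver {
    private static final Pattern REF =
            Pattern.compile("\\$\\{([^}]*?):(([^}]*?):)?([^}]*?)\\}");

    /**
     * @param lookup stand-in for a KeyVault client; receives (vault host, secret name)
     */
    static Map<String, String> resolve(Map<String, String> config,
                                       String providerName,
                                       BiFunction<String, String, String> lookup) {
        Map<String, String> resolved = new LinkedHashMap<>();
        for (Map.Entry<String, String> e : config.entrySet()) {
            Matcher m = REF.matcher(e.getValue());
            StringBuilder out = new StringBuilder();
            while (m.find()) {
                String replacement = m.group(0); // other providers' references stay as-is
                if (providerName.equals(m.group(1))) {
                    String value = lookup.apply(m.group(3), m.group(4));
                    if (value == null) {
                        throw new IllegalStateException("Secret not found: " + m.group(0));
                    }
                    replacement = value;
                }
                m.appendReplacement(out, Matcher.quoteReplacement(replacement));
            }
            m.appendTail(out);
            resolved.put(e.getKey(), out.toString());
        }
        return resolved;
    }
}
```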

The plugins can also decode base64 values and write secrets to files on disk if required. For example, a connector may require a PEM file: this can be stored securely in the KeyVault and downloaded for the connector to use. Take care to secure the directory these files are written to.

The Azure Secret Provider uses the “file-encoding” tag to determine this behaviour. The value for this tag can be:

* UTF8

* UTF8_FILE

* BASE64

* BASE64_FILE

UTF8 means the value returned is the string retrieved for the secret key.

BASE64 means the value returned is the base64-decoded string retrieved for the secret key.

If the value for the tag is UTF8_FILE, the string contents are written to a file, and the value returned for the connector configuration key is the location of that file. The file location is determined by the “file.dir” option given to the provider in the worker properties file.

If the value for the tag is BASE64_FILE, the contents are base64 decoded and written to a file, and the value returned for the connector configuration key is the location of that file. For example, if a connector needs a PEM file on disk, set the tag to BASE64_FILE. The file location is again determined by the “file.dir” option given to the provider in the worker properties file.

If no tag is found, the contents of the secret string are returned.
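
The four behaviours can be summarised in a small sketch. The `SecretEncoding` class, its `Tag` enum and the `materialise` method are hypothetical; only the tag values come from the description above, and `fileDir` plays the role of the provider's `file.dir` setting. A real deployment should also lock down permissions on that directory, which this sketch does not do.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Base64;

/** Hypothetical model of the "file-encoding" tag behaviours. */
final class SecretEncoding {
    enum Tag { UTF8, UTF8_FILE, BASE64, BASE64_FILE }

    /** Returns the value a connector would see for the configuration key. */
    static String materialise(String secretName, String secretValue, Tag tag, Path fileDir)
            throws IOException {
        if (tag == null) {
            return secretValue; // no tag: the secret string is returned as-is
        }
        switch (tag) {
            case UTF8:
                return secretValue;
            case BASE64:
                return new String(Base64.getDecoder().decode(secretValue), StandardCharsets.UTF_8);
            case UTF8_FILE:
                return write(fileDir, secretName, secretValue.getBytes(StandardCharsets.UTF_8));
            case BASE64_FILE:
                return write(fileDir, secretName, Base64.getDecoder().decode(secretValue));
            default:
                throw new IllegalArgumentException("Unknown tag " + tag);
        }
    }

    private static String write(Path fileDir, String name, byte[] contents) throws IOException {
        Files.createDirectories(fileDir);
        Path target = fileDir.resolve(name);
        Files.write(target, contents);
        return target.toString(); // the connector receives the path, not the secret
    }
}
```

For a PEM certificate stored base64-encoded in the vault, `materialise("cert.pem", storedValue, Tag.BASE64_FILE, fileDir)` writes the decoded bytes to `fileDir/cert.pem` and hands the connector that path.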

Ready to start managing your secrets with Azure KeyVault? Here’s the tutorial.