Alex Durham

Will your streaming data platform disturb your holiday?

Platform engineers, here's why you need to double down on DataOps before vacation.

In the past few months, everything has changed at work (or at home).

Q1 plans were scrapped. Reset buttons were smashed. It was all about cost-cutting and keeping the lights on. Many app and data teams sought quick solutions and developed workarounds to data challenges and operational problems as people prepared to work from home for the foreseeable future.

And now, it’s time for a holiday.

So how do you make sure the right real-time data is accessible to the data literate, not only the technology literate?

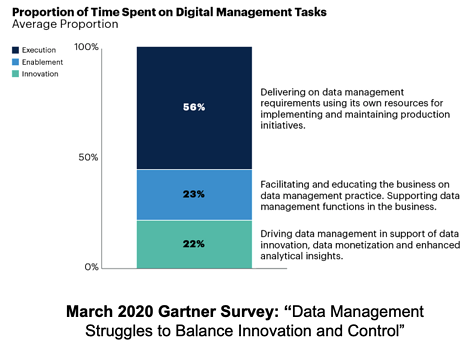

If your internal customers can operate the data platform themselves (i.e., they can access data, deploy apps, manage schemas, etc.), there’s less chance they will bother you just as you’re about to catch some rays, and their data projects can continue in your absence. According to Gartner, only 22% of a data team’s time is spent on new initiatives and innovation. With so little time left for new work, many data teams aren’t meeting expectations or, worse, feel beaten down and disempowered.

Focus on building the machine that builds the machine: provide self-service for your users.

An overview of performance metrics such as broker, producer and consumer health obviously helps you. But you should also make it available to your co-workers so they can identify, anticipate and fix soul-sucking errors independently, whilst you’re busy drinking a piña colada on your balcony.
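One consumer-health signal worth sharing is consumer lag: for each partition, the gap between the latest offset written to the log and the offset the consumer group has committed. The sketch below is plain Python and assumes you already export those two offsets per partition from your monitoring stack; `PartitionOffsets`, `partition_lag`, `group_health` and the threshold are hypothetical names for illustration, not any particular tool's API.

```python
from dataclasses import dataclass


@dataclass
class PartitionOffsets:
    """Offsets for one topic partition (hypothetical export format)."""
    topic: str
    partition: int
    log_end_offset: int     # offset the broker will assign to the next message
    committed_offset: int   # next offset the consumer group will read


def partition_lag(p: PartitionOffsets) -> int:
    """Messages written but not yet consumed; clamped at zero."""
    return max(p.log_end_offset - p.committed_offset, 0)


def group_health(partitions: list[PartitionOffsets], max_lag: int) -> dict:
    """Summarize lag for a consumer group and flag partitions above max_lag."""
    lags = {(p.topic, p.partition): partition_lag(p) for p in partitions}
    lagging = sorted(key for key, lag in lags.items() if lag > max_lag)
    return {
        "total_lag": sum(lags.values()),
        "max_partition_lag": max(lags.values(), default=0),
        "lagging_partitions": lagging,
        "healthy": not lagging,
    }


if __name__ == "__main__":
    offsets = [
        PartitionOffsets("payments", 0, log_end_offset=1_500, committed_offset=1_480),
        PartitionOffsets("payments", 1, log_end_offset=2_000, committed_offset=900),
    ]
    print(group_health(offsets, max_lag=500))
```

Publishing a summary like this where co-workers can see it lets them spot a stalled consumer (here, partition 1) without paging you.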

Metadata rules. If you can collect it from your different data infrastructure and applications, you're on the road to success (and a peaceful staycation). Let your customers answer questions like:

What data exists, and what is its profile?

What is its quality?

What service levels can I expect?

What is its data provenance?

How might other services be impacted?

How compliant is it?

Gartner agrees:

“By 2021, organizations that offer a curated catalog of internal and external data to diverse users will realize twice the business value from their data and analytics investments than those that do not.”

- “Augmented Data Catalogs: Now an Enterprise Must-Have for Data and Analytics Leaders,” Ehtisham Zaidi & Guido de Simoni, Sept. 12, 2019
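To make the catalog questions listed above concrete, one way to picture a catalog entry is a record with one field per question, plus lineage links that answer "how might other services be impacted?". This is a minimal, hypothetical Python sketch; the field names, example datasets and the `impacted_by` helper are illustrative assumptions, not the schema of any real catalog product.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Hypothetical catalog record with one field per question users ask."""
    name: str
    schema: dict[str, str]       # What data exists? (field name -> type)
    profile: dict[str, float]    # ...and its profile (e.g. null rate per field)
    quality_score: float         # What is its quality? (share of checks passing, 0.0-1.0)
    freshness_sla_seconds: int   # What service levels can I expect?
    upstream: list[str] = field(default_factory=list)    # What is its provenance?
    downstream: list[str] = field(default_factory=list)  # Who consumes it?
    compliance_tags: list[str] = field(default_factory=list)  # How compliant is it?


def impacted_by(catalog: dict[str, CatalogEntry], name: str) -> set[str]:
    """Follow downstream links transitively to find everything a change could affect."""
    seen: set[str] = set()
    stack = list(catalog[name].downstream)
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # already visited; also guards against lineage cycles
        seen.add(current)
        # Consumers outside the catalog (e.g. dashboards) have no further links.
        if current in catalog:
            stack.extend(catalog[current].downstream)
    return seen


if __name__ == "__main__":
    orders = CatalogEntry(
        "orders", {"id": "string", "amount": "double"}, {"amount_null_rate": 0.0},
        quality_score=0.99, freshness_sla_seconds=60,
        upstream=["checkout-service"], downstream=["orders_enriched"],
    )
    enriched = CatalogEntry(
        "orders_enriched", {"id": "string", "region": "string"}, {},
        quality_score=0.97, freshness_sla_seconds=120,
        upstream=["orders"], downstream=["finance-dashboard"], compliance_tags=["pii"],
    )
    catalog = {entry.name: entry for entry in (orders, enriched)}
    print(sorted(impacted_by(catalog, "orders")))  # ['finance-dashboard', 'orders_enriched']
```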

Just as with speaking or writing, different languages are the biggest barrier to communicating data. Transforming, testing, deploying and documenting data shouldn’t require intimate knowledge of Snowflake, MongoDB, Apache Kafka, Elasticsearch and Kubernetes. As the data landscape continues to proliferate, no one can be an expert in everything. Not even you.

Decoupling data from the underlying infrastructure before you go on holiday sounds painful.

But it really just involves finding a common denominator and a common language: data and SQL.
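As a rough illustration of SQL as the common language, the sketch below uses Python's built-in `sqlite3` module as a stand-in for whatever SQL layer sits on top of your streams. SQLite is not a streaming engine, and the `payments` table and its events are hypothetical; the point is only that the question is written in plain SQL, with no Kafka, MongoDB or Elasticsearch client code.

```python
import sqlite3

# Hypothetical payment events standing in for records on a stream.
events = [
    ("2020-07-01T09:00:00", "card", 120.0),
    ("2020-07-01T09:00:05", "paypal", 35.5),
    ("2020-07-01T09:00:09", "card", 80.0),
]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE payments (event_time TEXT, method TEXT, amount REAL)")
conn.executemany("INSERT INTO payments VALUES (?, ?, ?)", events)

# The question a data-literate colleague asks needs only SQL, not a store-specific API.
rows = conn.execute(
    "SELECT method, COUNT(*) AS n, SUM(amount) AS total "
    "FROM payments GROUP BY method ORDER BY total DESC"
).fetchall()
print(rows)  # [('card', 2, 200.0), ('paypal', 1, 35.5)]
conn.close()
```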

Making your data technologies and analytics capabilities navigable in SQL puts a world of events at the fingertips of anyone who speaks data.

It spreads data analytics practices, capabilities and cultural norms across your teams as they work remotely, whether or not they can reach you on Slack.

Speaking of Slack (not to mention Datadog, Prometheus Alertmanager, PagerDuty and AWS CloudWatch), make life easier for yourself by setting up alerts that go directly to your teams’ and internal customers’ favorite tools. This takes you out of the loop completely, so you can focus on your break, or on engineering the next big thing.
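Routing an alert straight to the owning team can start as a lookup from owner to destination. The sketch below assumes Slack incoming webhooks, which accept an HTTP POST with a JSON body containing a `text` field; the team names, webhook URLs, `ROUTES` table and `build_alert` helper are hypothetical placeholders. It only builds the request, so it runs offline.

```python
import json
import urllib.request

# Hypothetical routing table: owning team -> Slack incoming-webhook URL.
ROUTES = {
    "payments-team": "https://hooks.slack.com/services/EXAMPLE/PAYMENTS",
    "analytics-team": "https://hooks.slack.com/services/EXAMPLE/ANALYTICS",
}
# Hypothetical shared channel for datasets with no registered owner.
FALLBACK_ROUTE = "https://hooks.slack.com/services/EXAMPLE/DATA-ALERTS"


def build_alert(owner: str, dataset: str, message: str) -> urllib.request.Request:
    """Build (but do not send) a POST request to the owning team's webhook."""
    url = ROUTES.get(owner, FALLBACK_ROUTE)
    body = json.dumps({"text": f"[{dataset}] {message}"}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_alert("payments-team", "payments", "consumer lag above 500 messages")
    print(req.full_url, req.get_method(), req.data.decode("utf-8"))
    # To deliver it for real: urllib.request.urlopen(req, timeout=10)
```

Keeping the routing table next to the dataset owners, rather than in your head, is what lets alerts reach the right people while you are away.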

Unfortunately, many wannabe “data-driven” companies still see data governance as a set of support-function policies that IT executes and few others follow. This not only makes data initiatives difficult to scale; it also makes handing over your most strategic streaming applications before a vacation stressful.

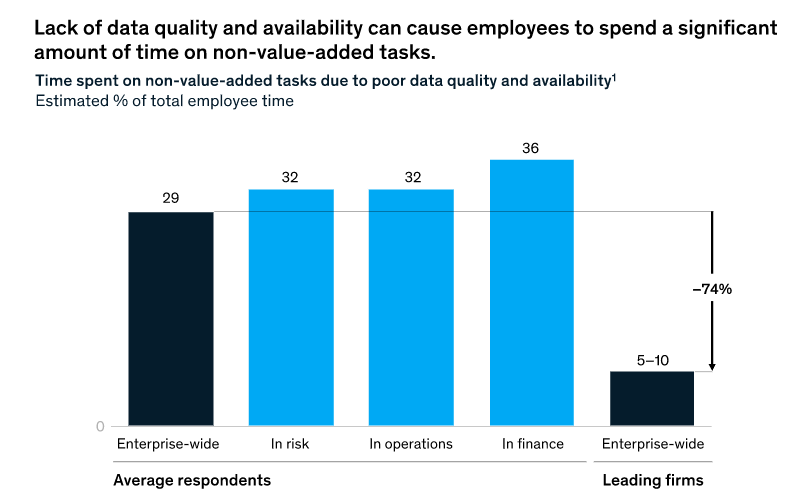

According to McKinsey:

By addressing the previous points, you’re already shifting data governance towards product teams, and integrating data at the point of production and consumption.

Begin by putting some simple checks and balances in place to create a hierarchy of data, and by introducing policies that control who can access what. You can prioritize the cataloguing of data and the auditing of user activities based on the level of transformational effort required and the level of regulatory risk. You can even get a head start with our Real-Time Data Catalog.
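A hierarchy of data plus access policies can start as a small table of sensitivity tiers and the roles cleared for each, with every decision logged for auditing. The sketch below is a hypothetical, deny-by-default check in Python; the tier names, roles, datasets and `can_access` function are illustrative assumptions, not the model of any specific product.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical sensitivity tiers, ordered from least to most restricted."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3  # e.g. data containing personal information


# Highest tier each (hypothetical) role may read.
ROLE_CLEARANCE = {
    "analyst": Tier.INTERNAL,
    "data-engineer": Tier.CONFIDENTIAL,
    "compliance-officer": Tier.REGULATED,
}

# Dataset -> tier; anything not yet classified is treated as REGULATED.
DATASET_TIER = {
    "web_clicks": Tier.INTERNAL,
    "orders": Tier.CONFIDENTIAL,
    "customer_profiles": Tier.REGULATED,
}

AUDIT_LOG: list[tuple[str, str, bool]] = []


def can_access(role: str, dataset: str) -> bool:
    """Deny by default: unknown roles get nothing, unknown datasets get the top tier."""
    clearance = ROLE_CLEARANCE.get(role)
    tier = DATASET_TIER.get(dataset, Tier.REGULATED)
    allowed = clearance is not None and clearance >= tier
    AUDIT_LOG.append((role, dataset, allowed))  # record every decision for auditing
    return allowed


if __name__ == "__main__":
    print(can_access("analyst", "web_clicks"))       # True
    print(can_access("analyst", "orders"))           # False
    print(can_access("data-engineer", "new_topic"))  # False: unclassified -> REGULATED
```

Treating unclassified data as the most restricted tier means the catalog can grow incrementally without opening gaps along the way.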

Tackling every data asset at once means slow progress. Prioritizing this way lets you create a roadmap of incremental change across teams rather than passing over all data assets in one go.

It means that you are no longer the bottleneck: not every critical data path leads directly to you anymore.

Clear measurement and monitoring of real-time data is on someone else’s desk, and you’re finally free to log off, close your laptop and catch some rays.

Enjoy.