Connect Kafka to MongoDB

Simplify and speed up your Kafka to MongoDB sink with a Kafka-compatible connector, deployed via the Lenses UI or CLI, as a native plugin, or with Helm charts for Kubernetes.

About the Kafka to MongoDB connector

License: Apache 2.0

The Mongo Sink allows you to write events from Kafka to MongoDB.

The connector converts the value of each Kafka Connect SinkRecord into a MongoDB Document and performs either an insert or an upsert, depending on the configuration you choose.
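
To make the difference between the two write modes concrete, here is a minimal sketch in Python. It is not the connector's code: an in-memory list stands in for a MongoDB collection, and the `insert` and `upsert` helpers and the `id` key are hypothetical names chosen for illustration.

```python
from typing import Any, Dict, List

Document = Dict[str, Any]


def insert(collection: List[Document], doc: Document) -> None:
    """Insert mode: every record is stored as a new document."""
    collection.append(dict(doc))


def upsert(collection: List[Document], doc: Document, key: str) -> None:
    """Upsert mode: replace the document with the same key, or insert it."""
    for i, existing in enumerate(collection):
        if existing.get(key) == doc[key]:
            collection[i] = dict(doc)
            return
    collection.append(dict(doc))


# Two record values for the same (hypothetical) order.
records = [
    {"id": 1, "status": "new"},
    {"id": 1, "status": "shipped"},
]

inserted: List[Document] = []
upserted: List[Document] = []
for record in records:
    insert(inserted, record)
    upsert(upserted, record, key="id")

print(len(inserted))  # 2
print(upserted)       # [{'id': 1, 'status': 'shipped'}]
```

With insert, both events are kept as separate documents; with upsert, the later event replaces the earlier one that shares the same key.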

The database should be created up front; the target MongoDB collections are created automatically if they don't exist. The connector is an open-source project and is therefore not covered by Lenses support SLAs.

Connector options for Kafka to MongoDB sink

Test the connector with Docker

Test in our pre-configured Lenses & Kafka sandbox, which comes packed with connectors.

Use Lenses with your Kafka

Manage the connector in Lenses against your Kafka and data.

Or get the Kafka to MongoDB connector from GitHub

Download the connector the usual way from GitHub.

Connector benefits

- Flexible Deployment

- Powerful Integration Syntax

- Monitoring & Alerting

- Integrate with your GitOps

Why use Lenses.io to sink Kafka to MongoDB?

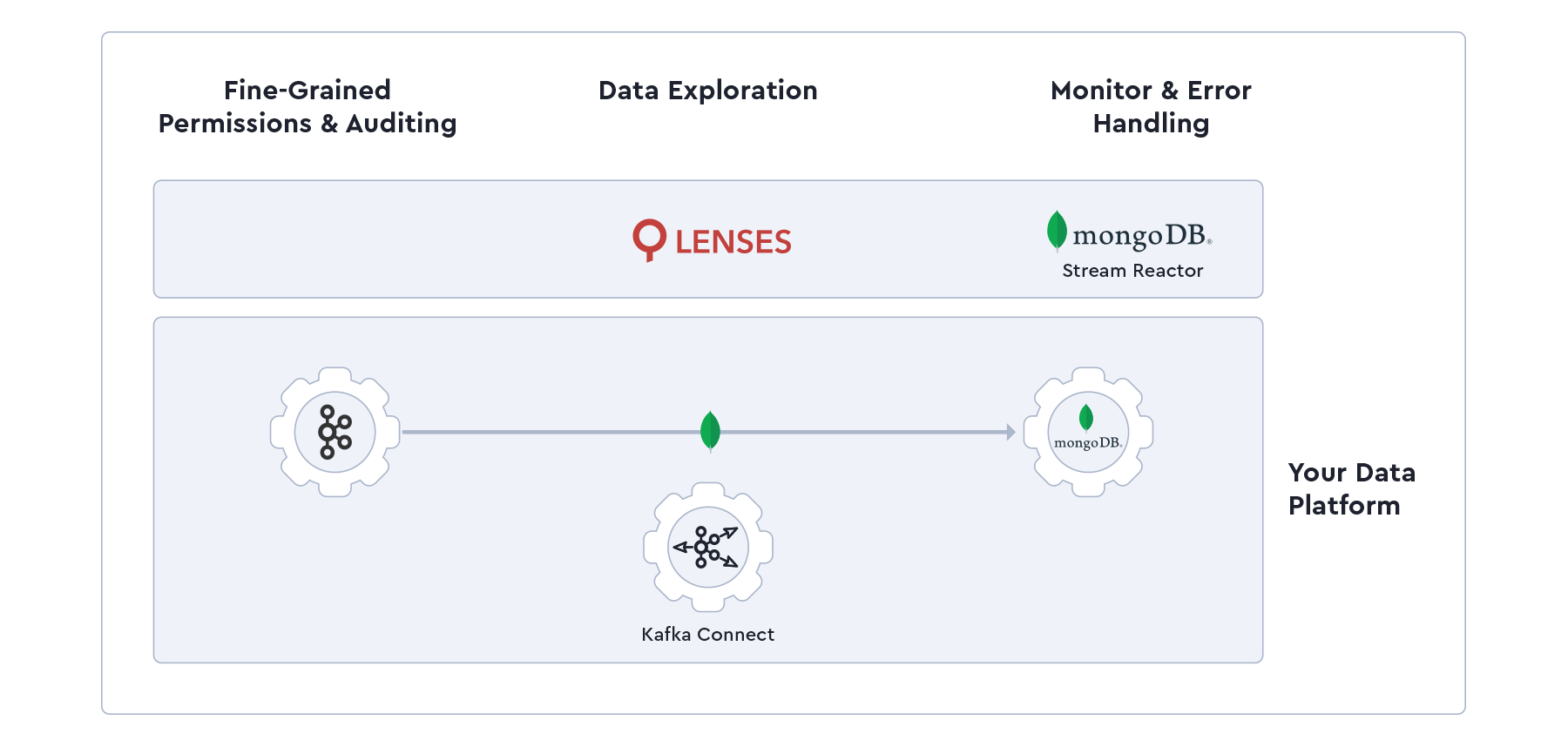

Managing the MongoDB Stream Reactor connector (and all the other connectors on your Kafka Connect cluster) through Lenses.io saves you from learning terminal commands and from endless back-and-forth when sinking data from Kafka to MongoDB. Lenses.io lets you freely monitor, process and deploy data with the following features:

- Error handling

- Fine-grained permissions

- Data observability

How to push data from Kafka to MongoDB

- Launch the stack by copying the docker-compose file

- Prepare the target system by creating the MongoDB container

- Start the connector by logging into Lenses.io, navigating to the Connectors page and selecting MongoDB as the sink
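
For readers who want to see what a sink configuration might look like before filling it in, the sketch below builds a connector definition in the JSON shape accepted by the Kafka Connect REST API (`name` plus `config`). The connector class name and the `connect.mongo.*` property keys are assumptions based on the Stream Reactor project and vary between releases, so check them against the documentation for your version. The topic, collection, database and host names are hypothetical.

```python
import json
from typing import Any, Dict, Optional


def mongo_sink_config(
    name: str,
    topic: str,
    collection: str,
    database: str,
    connection_uri: str,
    primary_key: Optional[str] = None,
) -> Dict[str, Any]:
    """Build a connector definition; upsert is used when a primary key is given."""
    if primary_key:
        kcql = f"UPSERT INTO {collection} SELECT * FROM {topic} PK {primary_key}"
    else:
        kcql = f"INSERT INTO {collection} SELECT * FROM {topic}"
    return {
        "name": name,
        "config": {
            # Assumed class name; newer releases may use a different package.
            "connector.class": "com.datamountaineer.streamreactor.connect.mongodb.sink.MongoSinkConnector",
            "tasks.max": "1",
            "topics": topic,
            # Assumed Stream Reactor property keys.
            "connect.mongo.connection": connection_uri,
            "connect.mongo.db": database,
            "connect.mongo.kcql": kcql,
        },
    }


payload = mongo_sink_config(
    name="mongo-sink-orders",               # hypothetical connector name
    topic="orders",                         # hypothetical Kafka topic
    collection="orders",                    # hypothetical target collection
    database="connect",                     # hypothetical, created up front
    connection_uri="mongodb://mongo:27017",  # hypothetical container host
    primary_key="id",
)
print(json.dumps(payload, indent=2))
```

Omitting `primary_key` yields an `INSERT` statement instead, which matches the insert mode described earlier.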