Antonios Chalkiopoulos

Kafka security via data encryption: end-to-end data security

End-to-End data security


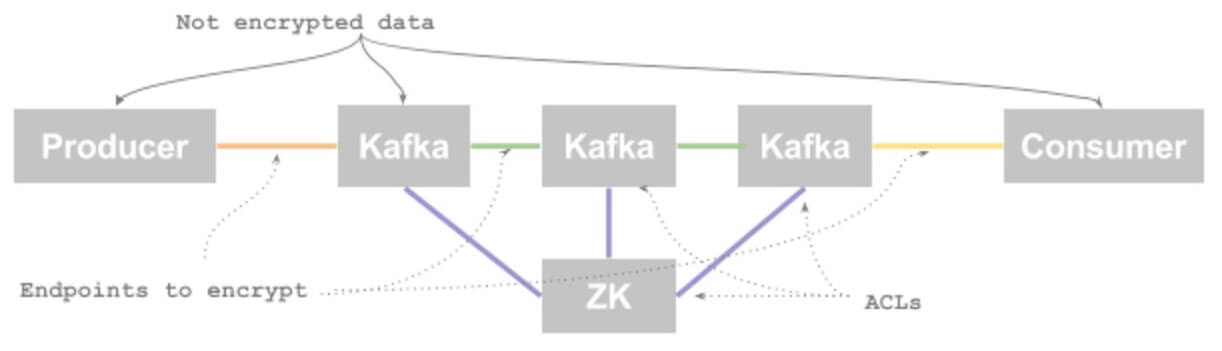

When it comes to security, Apache Kafka, like every other distributed system, provides the mechanisms to transfer data securely across its components. Depending on your setup, this might involve services such as Kerberos, multiple TLS certificates and an advanced ACL setup in the brokers and ZooKeeper. In many cases, enabling these encryption features also comes with a performance penalty.

Even though a secure network infrastructure ensures that data is encrypted in transit, what happens to your data when it is at rest, or after it leaves the cluster?

Some use cases that deal with sensitive or critical data may require the data, or even individual fields, to be encrypted, or pseudonymization to be applied, so that the data stays protected wherever it is distributed or stored. This usually applies to projects that must comply with regulations such as GDPR, HIPAA, HITECH and PCI.

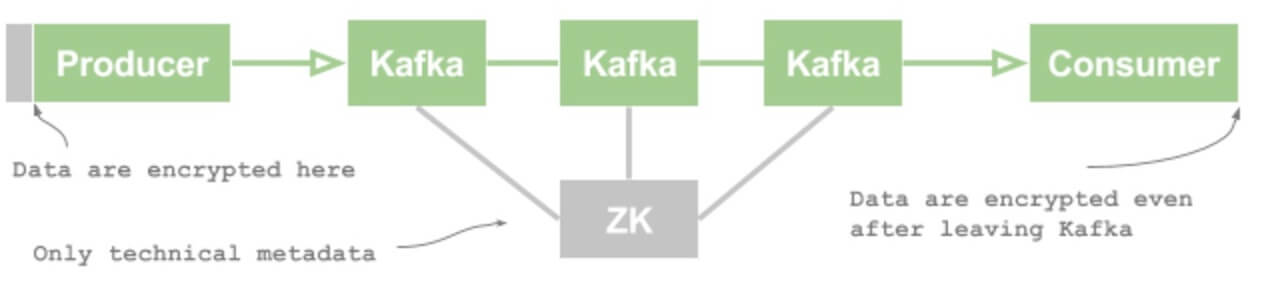

An end-to-end data encryption approach ensures that our data is secure in transit, at rest, in use and even after it leaves the Kafka cluster.

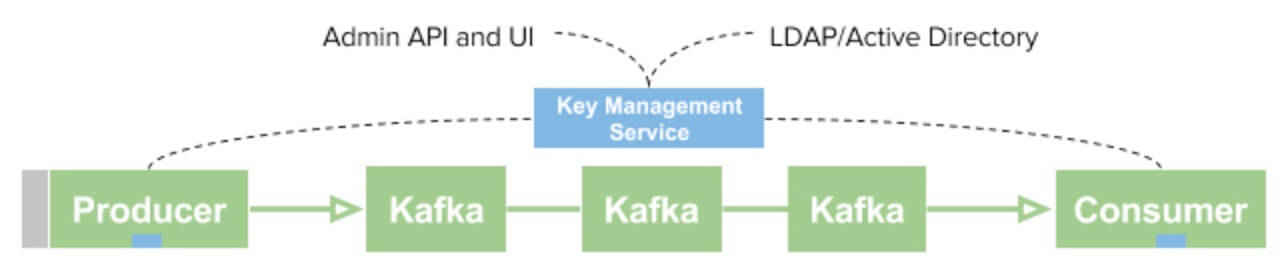

In a simple implementation, a public/private key pair can be used: the producer encrypts the data with the public key, and the private (decryption) key is given only to ‘trusted’ consumers. With this approach, data is encrypted end to end, which gives us the additional security guarantees. However, this creates a new problem: how to manage all these keys. For this reason, we need to introduce a key management service.
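A minimal sketch of this idea in Java follows. Encrypting whole messages directly with RSA is impractical, so the sketch swaps in the common hybrid (envelope) scheme: the producer encrypts the payload with a fresh AES-GCM key and wraps that key with the trusted consumer's RSA public key. The class and method names (`HybridEnvelope`, `encrypt`, `decrypt`, `newConsumerKeyPair`) are illustrative assumptions, not part of Kafka or of any particular encryption library; only standard JDK `javax.crypto` calls are used.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.KeyPair;
import java.security.KeyPairGenerator;
import java.security.PrivateKey;
import java.security.PublicKey;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical helper: hybrid encryption sketch, not a Kafka or library API.
public class HybridEnvelope {
    private static final SecureRandom RNG = new SecureRandom();
    private static final String RSA = "RSA/ECB/OAEPWithSHA-256AndMGF1Padding";
    private static final String AES = "AES/GCM/NoPadding";

    /** What the producer would publish: wrapped data key, GCM nonce and ciphertext. */
    public record Envelope(byte[] wrappedKey, byte[] iv, byte[] ciphertext) {}

    /** Key pair held by a 'trusted' consumer; only the public half goes to producers. */
    public static KeyPair newConsumerKeyPair() {
        try {
            KeyPairGenerator kpg = KeyPairGenerator.getInstance("RSA");
            kpg.initialize(2048);
            return kpg.generateKeyPair();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Producer side: needs only the consumer's public key. */
    public static Envelope encrypt(byte[] plaintext, PublicKey consumerKey) {
        try {
            KeyGenerator kg = KeyGenerator.getInstance("AES");
            kg.init(256);
            SecretKey dataKey = kg.generateKey();        // fresh symmetric key for this message
            byte[] iv = new byte[12];
            RNG.nextBytes(iv);
            Cipher aes = Cipher.getInstance(AES);
            aes.init(Cipher.ENCRYPT_MODE, dataKey, new GCMParameterSpec(128, iv));
            byte[] ciphertext = aes.doFinal(plaintext);  // bulk data: fast symmetric cipher
            Cipher rsa = Cipher.getInstance(RSA);
            rsa.init(Cipher.WRAP_MODE, consumerKey);
            return new Envelope(rsa.wrap(dataKey), iv, ciphertext); // small key: public-key cipher
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Consumer side: only the private-key holder can unwrap the data key. */
    public static byte[] decrypt(Envelope env, PrivateKey consumerKey) {
        try {
            Cipher rsa = Cipher.getInstance(RSA);
            rsa.init(Cipher.UNWRAP_MODE, consumerKey);
            SecretKey dataKey = (SecretKey) rsa.unwrap(env.wrappedKey(), "AES", Cipher.SECRET_KEY);
            Cipher aes = Cipher.getInstance(AES);
            aes.init(Cipher.DECRYPT_MODE, dataKey, new GCMParameterSpec(128, env.iv()));
            return aes.doFinal(env.ciphertext());
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        KeyPair consumer = newConsumerKeyPair();
        byte[] msg = "{\"card\":\"4111-1111-1111-1111\"}".getBytes(StandardCharsets.UTF_8);
        Envelope env = encrypt(msg, consumer.getPublic());
        System.out.println(new String(decrypt(env, consumer.getPrivate()), StandardCharsets.UTF_8));
    }
}
```

Wrapping a fresh key for every message keeps the sketch short but is costly, and every additional consumer needs its own wrapped copy of the key, which is exactly the key-management burden that a dedicated service is meant to take on.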

A key management service can provide facilities for common operations and security policies, such as revoking keys, rotating keys on a daily basis and managing key distribution to consumers. Ultimately, we want the producer to encrypt once and, through a delegation service, let multiple consumers access that data at different access levels.
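To make these operations concrete, here is a toy, in-memory sketch of what a key management service does. `ToyKeyManager` and its methods are hypothetical; a real deployment would call an external KMS or vault rather than keep keys in process memory. Keys are versioned per access level, so rotation adds a new version while older messages remain decryptable, and grants decide which consumer may fetch which level's keys.

```java
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.Set;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;

/** Hypothetical, in-memory stand-in for a key management service (not a real KMS API). */
public class ToyKeyManager {
    // access level -> key versions (index = version); old versions stay available
    private final Map<String, List<SecretKey>> keysByLevel = new HashMap<>();
    // consumer id -> access levels it has been granted
    private final Map<String, Set<String>> grants = new HashMap<>();

    private static SecretKey newAesKey() {
        try {
            KeyGenerator kg = KeyGenerator.getInstance("AES");
            kg.init(256);
            return kg.generateKey();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Rotation: create a new key version for a level, e.g. from a daily scheduled job. */
    public synchronized int rotate(String level) {
        List<SecretKey> versions = keysByLevel.computeIfAbsent(level, l -> new ArrayList<>());
        versions.add(newAesKey());
        return versions.size() - 1;
    }

    public synchronized int currentVersion(String level) {
        List<SecretKey> versions = keysByLevel.get(level);
        if (versions == null) {
            throw new IllegalStateException("no key for level " + level);
        }
        return versions.size() - 1;
    }

    /** Producer side: always encrypt with the newest version. */
    public synchronized SecretKey producerKey(String level) {
        int version = currentVersion(level);
        return keysByLevel.get(level).get(version);
    }

    public synchronized void grant(String consumerId, String level) {
        grants.computeIfAbsent(consumerId, c -> new HashSet<>()).add(level);
    }

    /** Revocation: future key requests from this consumer for this level are refused. */
    public synchronized void revoke(String consumerId, String level) {
        Set<String> levels = grants.get(consumerId);
        if (levels != null) {
            levels.remove(level);
        }
    }

    /** Distribution: a consumer gets a key version only for levels it has been granted. */
    public synchronized Optional<SecretKey> keyFor(String consumerId, String level, int version) {
        if (!grants.getOrDefault(consumerId, Set.of()).contains(level)) {
            return Optional.empty();
        }
        List<SecretKey> versions = keysByLevel.getOrDefault(level, List.of());
        if (version < 0 || version >= versions.size()) {
            return Optional.empty();
        }
        return Optional.of(versions.get(version));
    }

    public static void main(String[] args) {
        ToyKeyManager kms = new ToyKeyManager();
        int v0 = kms.rotate("pci");
        kms.grant("billing-service", "pci");
        System.out.println(kms.keyFor("billing-service", "pci", v0).isPresent());   // true
        System.out.println(kms.keyFor("marketing-service", "pci", v0).isPresent()); // false
    }
}
```

Note that in this design revocation only stops future key requests; a key that a consumer has already fetched and cached stays usable, which is one reason regular rotation matters.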

To integrate this into producer and consumer applications, Kafka interceptors are a good option. Interceptors are a pluggable mechanism for producers and consumers that lets us plug libraries (with encryption algorithms and key-management integration) into existing JVM applications through a configuration change, without modifying the application code itself.
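The configuration change itself can be sketched as follows. `interceptor.classes` is the real Kafka client setting for both producers and consumers; a producer interceptor implements `ProducerInterceptor.onSend`, which receives each record before serialization and returns the record to send, and a consumer interceptor implements `ConsumerInterceptor.onConsume`, which can transform records before `poll()` returns them. The interceptor class names below are hypothetical placeholders for an encryption library. Because `onSend` must return a record with the same key and value types, the sketch assumes `byte[]` values.

```java
import java.util.Properties;

public class InterceptorConfig {
    // Hypothetical classes: they would implement Kafka's ProducerInterceptor /
    // ConsumerInterceptor interfaces and ship in a jar on the application classpath.
    public static final String ENCRYPTING_INTERCEPTOR = "com.example.crypto.EncryptingProducerInterceptor";
    public static final String DECRYPTING_INTERCEPTOR = "com.example.crypto.DecryptingConsumerInterceptor";

    public static Properties producerProps(String bootstrapServers) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.ByteArraySerializer");
        // The only change to an existing producer: register the encrypting interceptor.
        props.put("interceptor.classes", ENCRYPTING_INTERCEPTOR);
        return props;
    }

    public static Properties consumerProps(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("group.id", groupId);
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.ByteArrayDeserializer");
        // Only 'trusted' consumers would be configured with the decrypting interceptor.
        props.put("interceptor.classes", DECRYPTING_INTERCEPTOR);
        return props;
    }

    public static void main(String[] args) {
        producerProps("localhost:9092").forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

These properties would be passed unchanged to `KafkaProducer` or `KafkaConsumer`; the application's send and poll logic stays the same.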

The same approach extends to Kafka Connect and Kafka Streams. For example, an encrypted message can be partially decrypted before being stored in an external system such as Cassandra, while the credit card information stays encrypted at a different access level. Such policy-based encryption with field-level granularity works best with structured messages such as Avro or JSON.
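A minimal sketch of field-level, policy-based encryption, assuming a message is modeled as a `Map<String, String>` in place of a real Avro or JSON record. The policy maps field names to access levels, each level has its own AES-GCM key, and a sink that holds only some level keys decrypts only those fields. The class, its methods and the `enc:` value prefix are hypothetical illustrations, not the format of any existing connector or library.

```java
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Base64;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical field-level encryption helper (illustration only).
public class FieldLevelCrypto {
    private static final SecureRandom RNG = new SecureRandom();
    private static final String PREFIX = "enc:"; // hypothetical marker for encrypted values

    public static SecretKey newKey() {
        try {
            KeyGenerator kg = KeyGenerator.getInstance("AES");
            kg.init(256);
            return kg.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Encrypts one value with AES-GCM and encodes it as "enc:" + Base64(iv || ciphertext). */
    public static String encryptField(String value, SecretKey key) {
        try {
            byte[] iv = new byte[12];
            RNG.nextBytes(iv);
            Cipher c = Cipher.getInstance("AES/GCM/NoPadding");
            c.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(128, iv));
            byte[] ct = c.doFinal(value.getBytes(StandardCharsets.UTF_8));
            byte[] out = new byte[iv.length + ct.length];
            System.arraycopy(iv, 0, out, 0, iv.length);
            System.arraycopy(ct, 0, out, iv.length, ct.length);
            return PREFIX + Base64.getEncoder().encodeToString(out);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static String decryptField(String encoded, SecretKey key) {
        try {
            byte[] in = Base64.getDecoder().decode(encoded.substring(PREFIX.length()));
            Cipher c = Cipher.getInstance("AES/GCM/NoPadding");
            c.init(Cipher.DECRYPT_MODE, key, new GCMParameterSpec(128, in, 0, 12));
            return new String(c.doFinal(in, 12, in.length - 12), StandardCharsets.UTF_8);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Producer: each field named in the policy is encrypted with its access level's key. */
    public static Map<String, String> encryptRecord(Map<String, String> message,
            Map<String, String> policy, Map<String, SecretKey> levelKeys) {
        Map<String, String> out = new LinkedHashMap<>();
        message.forEach((field, value) -> {
            String level = policy.get(field);
            out.put(field, level == null ? value : encryptField(value, levelKeys.get(level)));
        });
        return out;
    }

    /** Sink/consumer: decrypts only fields whose level key it holds; the rest stay encrypted. */
    public static Map<String, String> partiallyDecrypt(Map<String, String> message,
            Map<String, String> policy, Map<String, SecretKey> heldKeys) {
        Map<String, String> out = new LinkedHashMap<>();
        message.forEach((field, value) -> {
            String level = policy.get(field);
            SecretKey key = level == null ? null : heldKeys.get(level);
            out.put(field, key != null && value.startsWith(PREFIX) ? decryptField(value, key) : value);
        });
        return out;
    }

    public static void main(String[] args) {
        Map<String, SecretKey> levelKeys = Map.of("pii", newKey(), "pci", newKey());
        Map<String, String> policy = Map.of("name", "pii", "card", "pci");
        Map<String, String> message = new LinkedHashMap<>();
        message.put("id", "42");
        message.put("name", "Alice");
        message.put("card", "4111111111111111");
        Map<String, String> encrypted = encryptRecord(message, policy, levelKeys);
        // A sink (e.g. a connector writing to Cassandra) holding only the "pii" key:
        Map<String, String> stored = partiallyDecrypt(encrypted, policy, Map.of("pii", levelKeys.get("pii")));
        System.out.println(stored.get("name"));                    // Alice
        System.out.println(stored.get("card").startsWith("enc:")); // true
    }
}
```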

With SSL/TLS enabled on the Kafka brokers, Kafka's zero-copy optimization (sending data straight from the page cache to the network socket) can no longer be used. Outgoing data has to be copied from the page cache into the broker's memory and encrypted by the CPU before it is written to the socket, and incoming data has to be decrypted before it is written to disk. This puts additional pressure on the CPU and lowers Kafka's throughput.
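The difference between the two data paths can be sketched with plain JDK NIO. The plaintext path uses `FileChannel.transferTo`, which lets the operating system move bytes from the page cache to the target (for example via `sendfile` on Linux when the target is a socket) without copying them into the JVM. The encrypted path has to read the bytes into a heap buffer and encrypt them first; AES-GCM here is only a stand-in for the record encryption a TLS engine performs, and the class and method names are hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Channels;
import java.nio.channels.FileChannel;
import java.nio.channels.WritableByteChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical illustration of zero-copy vs. encrypt-in-user-space.
public class CopyPaths {
    /** Plaintext path: the OS can move page-cache bytes to the target without a JVM copy. */
    public static long zeroCopy(Path segment, WritableByteChannel target) {
        try (FileChannel in = FileChannel.open(segment, StandardOpenOption.READ)) {
            long pos = 0;
            long size = in.size();
            while (pos < size) {
                pos += in.transferTo(pos, size - pos, target);
            }
            return pos;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Encrypted path: copy into the heap, encrypt on the CPU, then write iv + ciphertext. */
    public static long encryptThenCopy(Path segment, WritableByteChannel target, SecretKey key) {
        try {
            byte[] plain = Files.readAllBytes(segment);       // page cache -> JVM heap copy
            byte[] iv = new byte[12];
            new SecureRandom().nextBytes(iv);
            Cipher aes = Cipher.getInstance("AES/GCM/NoPadding");
            aes.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(128, iv));
            byte[] encrypted = aes.doFinal(plain);            // CPU work over every byte
            ByteBuffer out = ByteBuffer.allocate(iv.length + encrypted.length).put(iv).put(encrypted);
            out.flip();
            long written = 0;
            while (out.hasRemaining()) {
                written += target.write(out);
            }
            return written;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static Path tempSegment(byte[] content) {
        try {
            return Files.write(Files.createTempFile("segment", ".log"), content);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static SecretKey newKey() {
        try {
            KeyGenerator kg = KeyGenerator.getInstance("AES");
            kg.init(128);
            return kg.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Path seg = tempSegment("hello kafka".getBytes(StandardCharsets.UTF_8));
        System.out.println(zeroCopy(seg, Channels.newChannel(new ByteArrayOutputStream())));                  // 11
        System.out.println(encryptThenCopy(seg, Channels.newChannel(new ByteArrayOutputStream()), newKey())); // 39
    }
}
```

The second path writes 12 bytes of nonce and a 16-byte authentication tag on top of the 11 plaintext bytes, but the more important cost is the extra copy and the per-byte cipher work, which a broker pays for every fetch when TLS is enabled.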

By delegating encryption and decryption to the producers and consumers, the cryptographic cost is paid by the client applications (once per message on the producer side) rather than by the brokers, so it does not affect the overall performance of your Kafka cluster.

If your organization cares about (i) data sharing, (ii) cloud enablement, (iii) data migration or (iv) compliance and data governance, then encryption at the data layer is the additional safeguard that ensures your data remains an asset and not a liability.

NuCypher is a cryptographically enforced access control system based on proxy re-encryption. The technology is currently used mostly in finance, insurance and health care, and it is expected to become popular in other industries soon.